By Jo Nova

Walking in the Valley of Political Death, after Trump, Farage, and One Nation took all the risks and paved the way out of the Climate Swamp, the Liberals have finally been dragged into saying a definite “No” to Net Zero, including repealing the toxic Safeguard Mechanism.

What they haven’t done as a party is show leadership. A few brave souls in the party have spoken out (like Andrew Hastie and Alex Antic) but the official Liberal policy, as explained last November, is still that lowering emissions is a worthy thing for no good reason other than being pagan weather-controlling witchcraft. Do the Liberals still think that they should pander to the Paris agreement blob? That’s what they said last year.

The thing about “playing it safe”, as the Liberals have done, is that they were waiting for The People to figure out Net Zero was a pile of voodoo before they would risk being called names by the teenage girly monsters. The problem is that once the people realize Net Zero is an international parasite thriving on grift and graft, it’s about a nanosecond before they want a real leader who will take a blow-torch to the parasites. In that nanosecond they flip to the party of true leaders, the ones who took a position based on principle and led the way.

Until the Liberals take some risks and face down the name-calling vipers, the voters won’t believe they have the mojo to take on the whole cartel of Blob Bankers, Blob Bureaucrats, vested Blob industries and foreign interests who depend upon our climate-patsy compliance with the fantasy. The Liberals need to start to sell the absurdities of “Net Zero” with conviction. The way to win the Teal seats is not to pander to the fantasy, it’s to mock it mercilessly. Every day the Liberals wait for the polls to shift they are that much closer to extinction.
It was a good speech, but it could have been a great one…

Extracts of Angus Taylor’s Budget Reply Speech

Second, prioritising net zero and emissions reduction above all else has seen cheap, always-on power dismissed for expensive, sometimes-on, industrial-scale renewables – mainly sourced from offshore. Power prices have soared – causing households to struggle, businesses to close, and industries to move offshore. Far from a future made in Australia, our future is being made abroad. Australians have been fed the lie that our economy can function on solar, wind, and batteries alone. But the truth is fossil fuels continue to drive our economy and our prosperity. In this Budget alone, Labor has $18 billion in new net zero spending. Labor’s net zero obsession is driving up inflation and destroying our economy. That’s why net zero must go.

…

The Coalition will also abolish Labor’s great big carbon tax – the so-called Safeguard Mechanism. This tax jacks up the price on essential building materials like steel, cement, and glass – driving up the cost of new homes.

If the Liberal Party have a rebellious spine, or courage under fire, it’s been excised. Those brave souls who spoke out when the costs of saying something were high were often pushed sideways or right out of the party (like Craig Kelly and Gerard Rennick, or Barnaby Joyce and Pauline Hanson). If the Liberals hadn’t tossed out people like this, they wouldn’t have wasted half a year voting for Sussan Ley as a leader in a doomed holding pattern. The party is a shadow of its former self. They did drop Net Zero targets last November, and promise to get rid of the Safeguard Mechanism, but then they still thought the Paris Agreement was useful.

By Jo Nova

So many great companies fell into the climate sink hole

Such was the cultural vibe that giant corporations all collectively jumped off a cliff together hoping to invent a new technology fast enough to be able to land. In the case of Honda, after 70 years of endless profits, they burnt at least $9 billion, and have given up the idea of trying to get EVs to make up one fifth of their sales by 2030. The demand just isn’t there. They also thought they could shift their whole fleet to electric or fuel cells by 2040. That’s gone too.

Honda posts first annual loss on $9 billion EV writedown, scraps EV sales goals

TOKYO, May 14 (Reuters) – Honda Motor (7267.T) posted its first annual loss in nearly 70 years as a listed company on Thursday, hit by more than $9 billion in costs to restructure its electric-vehicle business, and the firm scrapped its long-term EV sales target.

Revealing its worst financial report since Honda listed on the stock market in 1957 underscores how risky an aggressive bet on EVs can be for a legacy automaker when it slams into weaker-than-expected demand.

Toshihiro Mibe, CEO of Japan’s second-largest automaker, on Thursday said Honda is scrapping its goal of having EVs make up a fifth of its new car sales in 2030 as well as a target of a full shift to electric or fuel-cell vehicle sales by 2040.

And remember, the oil crisis is making things as good for EVs as it possibly can.

Image by Andrea Masciulli from Pixabay

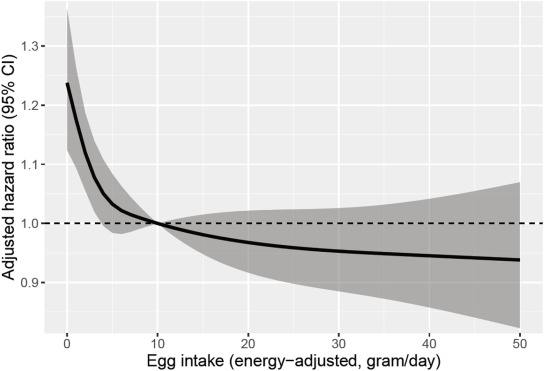

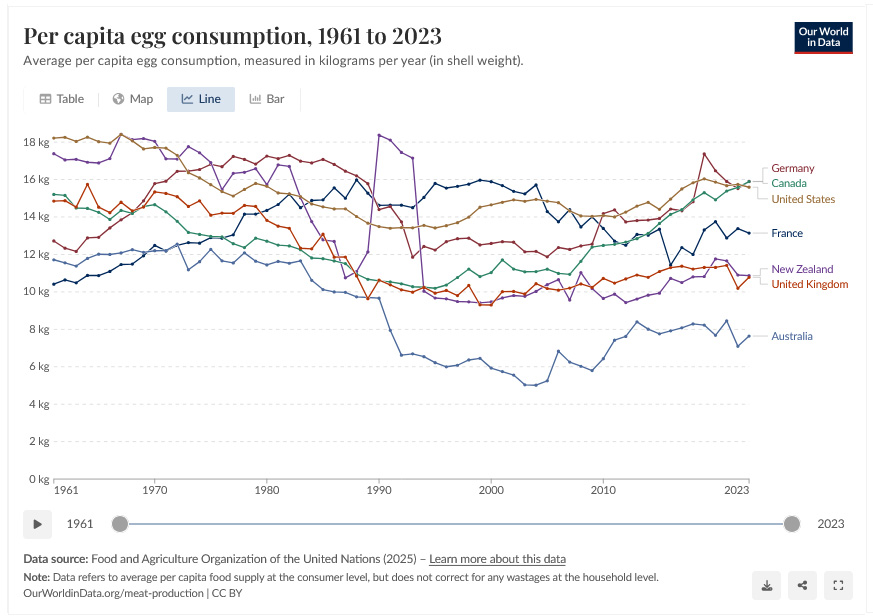

Photo by Biswarup Ganguly

By Jo Nova

The Experts said eggs were high-fat foods with too much cholesterol, and egg consumption halved for twenty years in Australia, and still hasn’t recovered. Though in the last ten years egg consumption has been increasing in places like the USA and Canada.

Egg consumption per capita in Australia and the UK. OWID

Research Shows That Avoiding Eggs Entirely Linked To 22% Higher Risk Of Memory-Stealing Disease

[StudyFinds]: But now researchers have tracked nearly 40,000 older adults for more than 15 years, and found that people who ate eggs regularly were far less likely to be diagnosed with Alzheimer’s disease than those who never or rarely touched them. The most frequent egg eaters, those having five or more servings a week, showed a 27% lower risk.

In this graph below, it’s almost like eating less than 10 grams a day of egg is a deficiency….

The study was published in The Journal of Nutrition, and drew on data from the Adventist Health Study-2. The results show there is an association between eggs and dementia, but this sort of study can’t prove causation. It’s always possible that people who have some high risk of dementia for some reason choose not to eat eggs. But on the plus side, the results were dose dependent: the more eggs people ate, the lower their risk of Alzheimer’s, which is about as good as it gets in this kind of study. And they did exclude all the obvious factors, including vegans, and still found the strong link.

There are reasons…

Eggs are some of the richest sources of choline, which is essential for brain and liver health. We know people taking anticholinergics (e.g. some antihistamines) may be at higher risk of Alzheimer’s, and the condition is associated with a loss of cholinergic neurons and a drop in acetylcholine levels. Two eggs provide almost as much choline as a 3 oz steak but cost a lot less. Eggs also have antioxidants like lutein and zeaxanthin, and they have some DHA fat that is more common in fish oil (especially if the eggs come from free range chickens).

The study was funded by the American Egg Board, so there’s a potential bias, but it raises the question: why wasn’t this study done in 1980 before the experts tossed eggs under the bus? Where was the government? For forty years people thought they were doing “the scientific thing” and were following the experts, but potentially thousands of people suffered with a form of dementia that might have been prevented?

Alzheimer’s is the fifth leading cause of death in the USA but the leading cause of death in a more egg-deficient Australia and also Number 1 in the UK. Though of course, there are other contributors that differ from country to country, like sunlight, vitamin D, and recent medical experiments. Tens of thousands of people suffered. They missed out on the joy of eggs, and possibly got Alzheimer’s too.

For no good reason I can think of, apart from being a rich nation with plenty of beef, Australia has some of the lowest rates of egg consumption.


REFERENCE

Jisoo Oh, Keiji Oda, Gabriela Chiriac, Gary E. Fraser, Rawiwan Sirirat, and Joan Sabaté, affiliated with the Center for Nutrition, Healthy Lifestyles, and Disease Prevention at the School of Public Health, Loma Linda University, and related departments at Loma Linda University in Loma Linda, California. “Egg Intake and the Incidence of Alzheimer’s Disease in the Adventist Health Study-2 Cohort Linked with Medicare Data.” The Journal of Nutrition. DOI: 10.1016/j.tjnut.2026.101541.

OWID – Egg consumption

Climate Change has become electoral poison

Too late, the socialists have realized they’ve lost the working class

Not only did the British Labour Party get humiliated in the last few days, but ten thousand miles away, so did the Australian conservatives, who suffered a catastrophic 30% swing to One Nation. The unthinkable is happening. Unelectable Climate Deniers are romping home politically, and the workers are voting “far-right”. Climate change and the core left-wing totems are not just failing to reach voters, they’re actively turning them away. It’s the same in the US where voters have already elected the antichrist of Climate Action (and three times already). It’s slowly dawning on the socialists that it is not a momentary blip.

Things are getting so bad, the New York Times warned Democrats to “Forget climate change, and talk about something else.”

Hat tip to Climate Depot

The left took the working class for granted:

Forget climate change. Democrats need to talk about other issues.

Matthew T Huber, New York Times

For the past several months, Democratic elites have been debating how much to talk about climate change, if at all, in part because these new candidates have narrowed their focus to energy affordability to win back the working class.

But their plan to win back the working class won’t work. They picked the wrong topic, then stuck to it like glue, then left it too late to say “sorry” and they aren’t saying sorry anyway. They’re not even admitting they were wrong. “To be clear, this does not mean an abandonment of climate goals,” they say. Instead they make excuses about how good leaders will do things that reduce emissions anyhow, like offering free buses, or redesigning building codes, but they won’t call it “climate change”. Because, shh, we don’t want the voters to know what we are doing, or what we believe. We just want to win, right? Yay, democracy?

In one survey 59% of voters were bothered that climate change had become political. That’s a huge slab of the population that doesn’t believe “climate science” is scientific anymore, it’s just political: 59%!

The Pew Research Center routinely asks Americans to rank their top concerns, and climate change is consistently near the bottom. The Searchlight Institute found that 59 percent of voters in battleground states are “bothered that climate change has become such a political issue,” while only 42 percent are “motivated to do more and support policies to address climate change.” Rather than building a broad coalition necessary to enact something like a Green New Deal, climate change has become yet another issue fueling polarization.

The core problem is that being in power is their only goal, which leaves them lost when it fails (and when it succeeds too). The solutions they come up with are only about “how to fool the voters into voting for us”, not something constructive like finding out what the people want, or solving some problem, or changing stupid policies in the first place.

The Democratic Party remains deeply unpopular. The way out is to stop elevating a litany of single-issue policies that appeal to the already converted. When it comes to climate change, for now, it might be better to say nothing at all.

Their big plan failed

They thought the Green New Deal would win over the working voters. They didn’t know (and still don’t realize) that for every green job invented, expensive energy would destroy two to five real jobs. Obviously, the workers live the reality.

Later, they must have decided that telling Democrats to “forget climate change” was too close to the truth, so they went back and edited the headline to hide that. Notice how the real meaning got obscured in the rewrite.

It’s what they do. They lie about everything.

By Jo Nova

Turns out, when they have a choice, the Brits don’t want Net Zero or Mass Immigration

The English Council Elections won’t change the UK parliament, but they are the largest, most significant poll of the mood of Great Britain. How bad is it? Half the headlines about the PM Keir Starmer are quoting him vowing that he won’t be quitting. It’s that bad.

Labour have lost 1,406 seats, and the Tories have lost 557. Results are still being counted, but extraordinary things are happening. Nigel Farage’s party, Reform UK, have stormed into more than 1,444 councillor seats in England (out of about 5,000), taken from Labour as well as the Tories. The Conservatives haven’t recovered. The Green wave didn’t happen. As Ross Clark said, “they were supposed to be ‘the insurgent party of the left’ and there was talk of them entering government as part of a left wing coalition”.

Restore Britain is a new party launched by ex-Reform MP Rupert Lowe, and endorsed by Elon Musk. They are so new, they only stood in 10 seats, but won all of them. Where Reform UK wants to stop the boats and deport illegal migrants, Restore Britain wants to reverse mass immigration.

Wales, meanwhile, is having a full election for the Senedd (which was called the Welsh Assembly). Wales is considered a die-hard Labour stronghold, yet after 27 years in government, Labour have lost, with the First Minister of Wales even losing her seat. The new force in Wales is Plaid Cymru, a centre left nationalist party, with Reform the second largest vote winner. Plaid Cymru want independence (eventually). Everyone wants to get rid of big bad governments, even on the left.

Labour suffers historic Wales loss as Reform wins more than 1,000 English council seats and Greens make gains

BBC (Updating regularly)

In England, Reform is the biggest winner, picking up more than 1,400 councillors so far. The Greens and Lib Dems also make gains, while the Conservatives lose almost 500 seats and Labour loses more than 1,300.

Farage says he’d be very sad to see Keir Starmer go: “He’s our greatest asset”. According to Sky News, UK politics has been upended:

If these results were projected to the House of Commons in a Parliamentary election, Reform would win 284 seats, but still fall short of the 326 seats it needs for an outright majority. But the Labour Party would be cut from 400 seats to 110 seats. The Tories would be left to negotiate to be part of a coalition.

“I’ve seen parties lose a lot of seats… What I’ve never seen before is a party come from zero to 40%” “I’ve never seen such enormous rapid changes in vote-share.”

And Australia has a one-seat test of the same principle today. The People versus The Blob in Farrer.

h/t Another Ian

By Jo Nova

Means, motive, and opportunity. It looks for all the world like China used dirty tactics to corner the rare earth processing plants of the world.

Michael Kern argues that for the last twenty years, every time a Western rare earth mining operation looked like it was about to build its own processing plant, Chinese producers would flood the market and crash the price of the metal. The investment case would evaporate and the company would go out of business.

This kind of predatory capitalism is all very well until the nice guys realize what’s going on and ban your products from their defense contracts, back their own start-ups, and those companies develop their own processing techniques, which is what is starting to occur in the US now. China was treating rare earths as a strategic weapon, while the West assumed the free market was free, and was hooked on the cheaper stuff. All’s fair in love and war, but dirty tricks have their own price.

How China Killed Every Rare Earth Competitor Before It Could Get Started

By Michael Kern, Oilprice

The West handed its rare earth processing capability to China roughly 40 years ago. The last major U.S. rare earth mine, Mountain Pass in California, closed in 2002, unable to compete with Chinese production costs. By 2010, China controlled approximately 90-95% of global rare earth production and an even larger share of the processing and refining that turns raw material into usable metals and magnets.

China was able to control the price because it not only controlled 90% of global rare earth production, but it also controls the Asian Metal Index (AMI). Even if the western company survived the price crash, they were often dependent on Chinese technology that only Chinese operators could maintain, and the CCP sometimes withdrew support leaving the western company with something they couldn’t use.

The Crisis That Should Have Changed Everything

The most dramatic chapter of this story came in 2010, when a territorial dispute between China and Japan over the Senkaku Islands triggered what many consider the first open weaponization of rare earth supply. In September 2010, China unofficially halted rare earth shipments to Japan. Within months, rare earth prices spiked dramatically, with the prices of some oxides increasing more than tenfold. The price of dysprosium oxide alone surged from roughly $90 per kilogram in early 2009 to over $2,300 per kilogram by mid-2011. What followed was a gold rush.

Then China did what it usually does. After the initial panic subsided and prices peaked, China eased its restrictions and flooded the market with supply. Prices collapsed just as quickly as they had risen. Dysprosium oxide, which had peaked above $2,300, fell back below $200 per kilogram by 2016. One by one, the Western projects that had launched during the boom ran out of money, ran out of investors, or simply couldn’t compete. Molycorp, the company behind the Mountain Pass revival, filed for bankruptcy in 2015.

Read it all. It’s a long article describing how things are changing.

On January 1 next year, the US defense procurement rules will ban all Chinese origin rare earth materials. That means price-wars can’t shake out the US suppliers. The US government is also going to financially back key suppliers. One company called REalloy has developed its own processing pathway. It is expected to produce 525 tonnes per year of neodymium-praseodymium metal by early next year.

Dirty tricks may help in the short term, but in the long run, it takes a long time to win back the trust.

ConocoPhillips Greater Ekofisk area, Norway.

By Jo Nova

Look how fast Norway is moving

While Australia and the UK pat themselves on the back, telling themselves that no one is interested in fossil fuels, the market price tells another story. Norway, meanwhile, is going gangbusters on project development. The EcoWorriers are not happy. These gas and oil fields were closed down in 1998 but there are still twenty years of gas and oil left to dig out. Production is due to start in 2028. The end of fossil fuels was always a myth The Blob wanted us to believe.

Norwegian government attacked over decision to reopen North Sea gasfields

By Miranda Bryant and Jillian Ambrose, The Guardian

Approval for exploration in 70 new areas prompts fierce backlash from fossil fuel opponents

Amid sharp price rises in oil and gas since the US and Israel’s attack on Iran in February, Oslo has also given its approval for oil and gas companies to explore in 70 new locations in the North Sea, Barents Sea and Norwegian Sea. The decision by the Labour-run government goes against the advice of the country’s environment agency and has infuriated left-leaning parties.

“We live in troubled times,” the prime minister, Jonas Gahr Støre, said as he announced the decision, which would “create great value for the community, lay the foundation for good jobs throughout the country, ensure our common welfare and contribute to Europe’s energy security and safety”.

There are at least five different projects and areas that are suddenly in action:

Norway’s state oil company, Equinor, hopes to develop the Rosebank oilfield, while Shell is waiting for a government decision on its Jackdaw gas project.

This will, of course, help rescue Europe from its green fantasy:

Norway Just Switched on Another Gas Lifeline for Europe

Equinor has fast-tracked the long-idled Eirin gas field into production, boosting European supply via existing infrastructure at a time when energy security still dominates policy. That backdrop explains why Eirin, holding expected recoverable resources of about 27.6 million barrels of oil equivalent, mainly gas, suddenly carries strategic weight.

Thanks to Ben Beattie who retweeted @yestiseye: “oh no, the Australia Institute is going to be so upset”

By Jo Nova

It couldn’t happen to a nicer parasitic committee

This is what happens when you treat your main benefactor like an idiot, and do everything possible to turn them into a vassal state of the Globalist Blob. In return for $800 million a year the UN spreads Chinese bioweapons, and throws giant junkets to reward The Blob loyalists, but nothing for the average American taxpayer. The US pays 22% of the regular UN budget, yet the UN has no respect for American voters or their choices.

UN Running Out of Cash, Trump Unmoved

Gateway Pundit

Unless collections improve, Guterres warned, the UN will run out of cash by July 2026. Wide-scale non-payment by member states has accelerated the crisis. In 2025, 42 of 193 member states failed to pay their assessments in full, and by the February 8, 2026 due date, only 55 countries had paid. The United States is the dominant factor. Historically the UN’s largest contributor at 22 percent of the regular and peacekeeping budgets, roughly $820 million per year, the U.S. paid no dues at all in 2025 and accounts for approximately 95 percent of all unpaid contributions currently owed. The total U.S. debt stands at $2.2 billion to the regular operating budget…

Not to put too fine a point on it, but the UN wants to control your medical data, decide which injections you must get, and dictate whether you can travel when the next pandemic comes, which it can declare any time it suits. The UN (plus Mark Carney) organized the bankers into a $130 trillion cartel to use pension funds against the average American (and western) voter. It dreams of putting a carbon tax on shipping so it can finally raise its own revenue (and be even less accountable than it already is). What looks, acts and smells like it’s working to become an unelected Global Government? And what’s the other word for that? Tyranny.

The United Nations is $1.57 billion in debt, quite an achievement for a group that has an annual budget of $3.5 billion.

We know the EU and patsy countries like ours will keep funding the UN. But Donald Trump has turned off the tap to crime and corruption. Celebrate the win…

POST NOTE: Lest anyone get the wrong idea, the other 78% of UN funding will no doubt continue, as the golden handshake promises of UN roles after politics tempt patsy leaders to chip in their nation’s wealth to help the poor suffering bureaucrats in Geneva. There is something to be said for electing billionaires who don’t want or need UN handouts. Our best hope is that stupid countries who are desperately in need of oil will be forced to pander to the US because it has what they want.

h/t Willie Soon


Copyright © 2026 JoNova - All Rights Reserved
