The Australian Bureau of Meteorology may not be meeting WMO, UK or US standards

Since the Australian BOM allows for one second “records”, it’s not clear it is even meeting guidelines recommended for amateurs.

The key question: How much of the warming trend in Australia is due to the switch in the mid-1990s from older, slower thermometers to new, zippy electronic ones that could record every waft of hot air? How many records today are just noise?

If the BOM would release its calibration and comparison data instead of deleting it, we might know. Why won’t they?

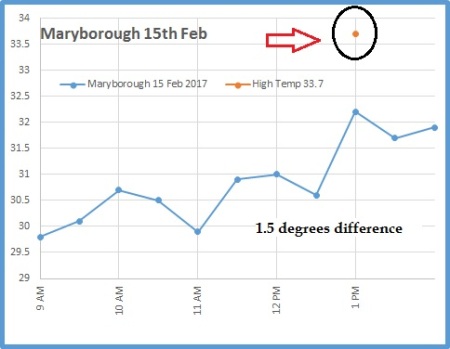

Here’s an example graph from Maryborough where the daily maximum was 1.5C above every thirty-minute reading. Ouch. Are we writing outliers and noise into our history books and climate databases?

Add “sampling method” and averaging to your skeptical vocabulary. There will be a lot more discussion on these.

Maryborough. Graph by Ken Stewart.

Let’s consider some basic standards in the meteorology world

The Weather Observer’s Handbook 2012 tells us the new electronic sensors are more sensitive than the old mercury thermometers. The author, Stephen Burt, explains that the new electronic sensors can be too sensitive, and will record “minor random temperature fluctuations”. This means they will simply “generate slightly higher maximums and slightly lower minimums” than the older equipment did. Temperatures from new style thermometers must be averaged over longer periods to make them comparable with the older mercury thermometers, and also to “iron out minor stray electrical noise or sensor logger resolution artefacts.” h/t Chris Gillham.

The WMO, the UK Met office and the US all use longer average samples than Australia

The WMO recommends a one-minute mean temperature as the standard, which is what the UK uses. In the US though, get this, meteorologists use a five-minute average, and further below, Lin and Hubbard suggest it should be seven minutes, not five.

Further complexifying things — I hear the UK may put electronic sensors outside the Stevenson screens, which would make a big difference. Keep that in mind. UPDATED: Now I’m hearing, not so, it was just one photo.

Records, trends, daily ranges — they can all change with the sampling method

Was that really a record? Here’s a case where longer sampling times meant a record was not set on one day in Dodge City:

“A good example is accorded by the maximum temperature recorded at Dodge City, Kansas during the heatwave which affected the southern and eastern states of America in summer 2011. Dodge City has one of the longest continuous temperature records in the United States, commencing in 1875. The hottest day on its long record stood at 110F (43.3C). On 26 June 2011 the highest 1 minute temperature observed was 111F (43.9C). However the value logged on an ASOS system was not accepted as a new record because ASOS take the maximum temperature as the highest 5 minute running mean, which was 110F. Thus the official high by the US method was 110F, tying rather than exceeding the previous record: by the WMO recommended method the maximum was 111F which would have set a new record.”

Here in Australia, with one second averaging, based on past behaviour, the new “record” could have been even higher, like 113F (45C) — who knows — which might have been measured for just one whole second. That kind of difference, which appears to be momentarily far above most of the data around it, has been entered into our official climate data online.
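
To see how the averaging window alone can decide whether a record is set, here is a minimal sketch. The one-minute readings are invented for illustration (they are not the Dodge City data), and `running_mean_max` is a hypothetical helper, not anything published by ASOS or the BOM.

```python
def running_mean_max(readings, window):
    """Highest mean over any `window` consecutive readings."""
    if window < 1 or window > len(readings):
        raise ValueError("window must be between 1 and len(readings)")
    best = float("-inf")
    for start in range(len(readings) - window + 1):
        chunk = readings[start:start + window]
        best = max(best, sum(chunk) / window)
    return best


# Invented one-minute temperatures (deg F) with a single brief spike.
one_minute = [109.0, 109.5, 110.0, 111.0, 110.0, 109.5, 109.0]

wmo_style_max = running_mean_max(one_minute, 1)   # highest 1-minute value
asos_style_max = running_mean_max(one_minute, 5)  # highest 5-minute running mean

print(wmo_style_max, round(asos_style_max, 1))
```

With these made-up numbers the one-minute method reports the spike itself, while the five-minute running mean comes in a degree lower. A shorter window can only match or exceed a longer one, never fall below it.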

The Australian Bureau of Meteorology (BOM) responds with “Fast Facts”

In reply, the Bureau of Meteorology just published FAST FACTS: How does the Bureau measure temperature? Jennifer Marohasy has already responded on her blog that the Bureau are rewriting the rules (read it all).

Let’s compare the Australian method to the US. In the US, researchers publish long papers on, say, the bias that occurs with two different ways of doing 5-minute averaging (more on that extraordinary detail below). The WMO only recommends a one minute average. Prepare yourself for the Australian BOM’s answer about why they don’t even do that (get ready to be surprised). Engineers everywhere may be crying…

The guide recommends that temperatures be integrated over time to smooth out rapid fluctuations. There is more than one method of achieving this. The WMO guidelines do not prescribe which method to take. In its automatic weather stations the Bureau achieves this by using platinum resistance thermometers. These are comparable to mercury in glass thermometers.

Say what? “Comparable” to mercury in glass? That last sentence goes against everything in the WMO guidelines and the handbook. It blithely writes off peer reviewed papers, not to mention engineering common sense. Apparently the BOM are claiming a kind of de facto averaging built into the system. Because their electronic sensors are inside the Stevenson screen, there is a sort of averaging (a lag, or a low-pass filter), but probably one that changes minute-by-minute with the wind speed. They also claim that the response time of the sensor is as long as or longer than the changes in the temperature of the air. (Color me unconvinced. Where is that data?)

As Jen Marohasy points out, this is far from a declaration that the electronic sensors are averaging temperatures in the same way that the old slow liquid-in-glass ones do. (Did I mention the data….?)

The BOM:

This means that each one second temperature value is not an instantaneous measurement of the air temperature but an average of the previous 40 to 80 seconds. This process is comparable to the observation process of an observer using a “mercury-in-glass” thermometer.

Spot the weasel-word “comparable” (in both paragraphs). Comparable is not equivalent. One and ten are comparable, but they are not equivalent. (Just like one second and sixty seconds, Hmm?) When Ken Stewart looked in detail at the Australian data he found a lot of volatility — in the most extreme case, temperatures fell by almost 3 degrees C in under one minute. In 44 of 100 cases, temperatures changed by more than 0.3C in the following minute. That doesn’t smell like “smoothing” or averaging. Think about what kind of overarching volatility it would take to create a smoothed average that still had that kind of instability.
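
A check of this kind is simple to reproduce if the minute-by-minute data is available. The sketch below counts how often consecutive one-minute values differ by more than a threshold. The sample numbers are made up, and `fraction_of_large_steps` is a hypothetical name, not Ken Stewart’s actual method.

```python
def fraction_of_large_steps(temps, threshold=0.3):
    """Share of consecutive pairs whose absolute change exceeds `threshold` (deg C)."""
    if len(temps) < 2:
        raise ValueError("need at least two readings")
    steps = [abs(b - a) for a, b in zip(temps, temps[1:])]
    large = sum(1 for s in steps if s > threshold)
    return large / len(steps)


# Made-up one-minute readings (deg C), for illustration only.
sample = [30.0, 30.5, 30.6, 31.2, 31.0, 28.5]
print(fraction_of_large_steps(sample))
```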

The bottom line: What we have is 20 years of incomplete raw data that includes random noise recorded from instruments that are nothing like the ones used for about eight of the last ten decades in Australian climate history. Somehow we are supposed to connect that data together?

The Bureau has a budget of $365 million a year, yet it’s looking like they have thrown away the raw detailed data for the last twenty years that might have been useful. What we are left with is — we have to ask — possibly not salvageable?

Where to from here?

The first thing the BOM needs to do is store all the b—– data. Second, it needs to make that public. Then we need to talk about changing the BOM sampling process and getting some averaging done properly. Then we need to set up a search and rescue team to go back through the last twenty years of electronic data and see what they can do. At this point, it would take a group of PhDs years to set up replica stations in many different conditions to gather enough data to be able to kinda “back predict” what the electronic data should have shown had it used a proper averaging method.

A one second wafting hot spike,

Gives a false average temperature hike,

Which for sensors is crude,

And with past records skewed,

Are the methods the B.O.M. like.

–Ruairi

________________

The Nitty Gritty: How temperatures are supposed to be measured

Now we get into some more detail about how different the standards are overseas. We get a better idea of things that muck up the data, so we can all start to appreciate how tricky this is — and perhaps what better management and processes might look like.

Even the US five minute averages are not necessarily good enough

A paper by Lin and Hubbard discusses in extraordinary detail how a systematic bias occurs. They have complex graphs showing how different sensors respond to changes in air temperatures at different temperatures, and with different wind speeds. (TX means maximum temperature; TN means minimum.)

Liquid in glass thermometers are fundamentally different from electronic (platinum resistance) thermometers. They are not equivalent:

Although the LIG thermometers in the CRS also is an instantaneous observation for TX and TN the relatively large LIG thermometer’s time constant plays an exclusive role in filtering out high frequency temperatures and exponentially weighted smoothing temperature signals.
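
The filtering effect of a large time constant can be sketched with a first-order lag model, a common textbook approximation for thermometer response (an assumption here, not Lin and Hubbard’s full model). The 5 s and 60 s time constants and the air-temperature series are illustrative values only.

```python
import math


def first_order_response(air_temps, time_constant, dt=1.0):
    """Simulate a sensor that relaxes toward air temperature with the given time constant (s)."""
    alpha = 1.0 - math.exp(-dt / time_constant)  # fraction of the gap closed each step
    reading = air_temps[0]
    out = []
    for air in air_temps:
        reading += alpha * (air - reading)
        out.append(reading)
    return out


# Illustrative air temperature: steady 30 C with a 10-second gust 2 C warmer.
air = [30.0] * 60 + [32.0] * 10 + [30.0] * 60

fast = first_order_response(air, time_constant=5.0)   # fast electronic-style sensor
slow = first_order_response(air, time_constant=60.0)  # slow, LIG-style sensor

print(round(max(fast), 2), round(max(slow), 2))
```

In this toy case the fast sensor registers most of the brief gust as its maximum, while the slow one barely moves. That is the sense in which a large time constant acts as a filter.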

The bias (error) can be as large as 0.2°C (and they are talking about 5 min averages):

For the ASOS observations, the ASOS does have a 5- min running average for daily TX and TN, however, the even smaller sensor’s time constant in the ASOS makes its biases systematically significant (0.05–0.2 °C warmer on average TX or cooler on average TN).

Hence they recommend 7-minute averages:

.. “Commonly-used 5 min average was not sufficient for the fast-response thermometers in surface climate networks while the WMO standard thermometer (20 s time constant) should have a 7-min running average for reporting daily maximum and minimum temperatures. The surface temperature sensors with smaller time constant than the standard LIG thermometers must implement a follow-up running average algorithm.”

Calibration alone is not enough to remove this effect:

It should be noted that most of surface temperature calibrations, even highly accurate calibrations, are unable to remove out this type of out-of-phase uncertainties in the TX and TN because the instrument calibrations for climate networks usually are of static calibrations rather than a dynamic calibration process for the electronic surface temperature sensors and the LIG maximum and minimum thermometers…

Is that warm bias bigger than the cool bias?

From the same paper: In the field the maximum temperatures (fig 8) are affected more than the minima (figure 9). Note that they are comparing two different ways to average 5 minute readings, and they still find a difference. They are not looking at the change from one-second to five minutes. That is something entirely different.

Daytime air turbulence intensity usually is stronger than that during nighttime, thus, it is understandable that the TX differences shown in Figure 8 were larger than the TN differences in Figure 9.

…

Thermometers circa 1912

The whole point of averaging is so we can compare modern temperatures with ones recorded a hundred years ago. So check out Thermometric Lag (1912) which looks at the response time in different media including air at different wind speeds. The chart comes from a study published by the American Meteorological Society. Basically, if the wind is very still (slow in cm/sec) it takes a lot longer for a thermometer to respond to a change in temperature outside the screen. A thermometer in air may take over 3 minutes to respond on a still day. When wind speed is very fast, the change is more rapid.
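
The lag described here is often approximated as a first-order response. This is a standard textbook simplification, not a formula taken from the 1912 paper. The symbol $\tau$ (the time constant) is an assumed label for how sluggish the thermometer is, and it gets larger as the wind drops.

```latex
T(t) = T_a + (T_0 - T_a)\, e^{-t/\tau}
```

Here $T_0$ is the thermometer’s starting reading and $T_a$ is the new air temperature. After one time constant the thermometer has covered about 63% of the change ($1 - e^{-1} \approx 0.63$), and after three time constants about 95% ($1 - e^{-3} \approx 0.95$).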

THE BACKGROUND LIST — Scandal after scandal

- BOM Scandal: One second records in Australia — how “noise” creates history and a warming trend

- Another BOM scandal: Australian climate data is being destroyed as routine practice

- BOM scandal: “smart cards” filter out coldest temperatures. Full audit needed ASAP!

- BOM scandal heats up: Kininmonth, Watts, Nova quoted in The Australian “We audit banks, why not BOM?”

- Scandal: Australian Bureau of Meteorology caught erasing cold temperatures

- On Sunday, Goulburn got colder than the BOM thought was possible (and a raw data record was “adjusted”).

- Two-thirds of Australias warming due to “adjustments” — according to 84 historic stations

- The mysterious BOM disinterest in hot historic Australian Stevenson screen temperatures

- The lost climate knowledge of Deacon 1952: hot dry summers from 1880-1910

- 1953 Headline: Melbourne’s weather is changing! Summers getting colder and wetter

- The mysterious lost hot Sunday in Bourke, did it really happen?

- Wow, look at those BOM adjustments – trends up by two degrees C!

- Australian BOM “neutral” adjustments increase minima trends up 50%

- Was the Hottest Day Ever in Australia not in a desert, but in far south Albany?!

- Hottest summer record in Australia? Not so, says UAH satellite data

- Mystery black-box method used to make *all new* Australian “hottest” ever records

- BOMs new data set, ACORN, so bad it should be withdrawn (954 min temps larger than the max!)

- Threat of ANAO Audit means Australia’s BOM throws out temperature set, starts again, gets same results

- Australian Temperatures in cities adjusted up by 70%!?

All kinds of other oddities of Australian temperature records were discussed in my chapter “Mysterious Revisions to Australia’s Long Hot History” in the new book Climate Change: The Facts 2017. Co-authors include Clive James, Matt Ridley, Willie Soon, Roy Spencer, and Anthony Watts. Get your copy now, the first edition sold out. Also available as Ebook on Amazon.

REFERENCES

Lin & Hubbard (2008), Int. J. Climatol. 28: 283–294. http://onlinelibrary.wiley.com/doi/10.1002/joc.1536/pdf

If BOM won’t publish the data, the algorithms or anything else they hold secret then it has to be assumed this is because BOM have no confidence that any of this would survive public scrutiny.

If BOM believe what they are doing is scientifically sound then they must ‘Publish and be Damned’.

But the ‘Damned’ bit is probably, in their eyes, the root of the problem.

Excellent comment, Roger. In the scientific world, in which BOM claims its place, publication enhances the scientist’s recognition and stature, including pay scale.

Therefore, to adamantly avoid publication and discard public data, in and of itself, suggests that BOM is not telling us all they know. Moreover, the data they collect is public property and belongs to the citizens.

In the end it’s about the money and how to get more. Quality is a useful thing if it can get you a bigger budget. Australia is guv bloated. It’s a terminal disease.

I think it’s only fair to ask for the raw data to be published, after all, what do they have to hide?

I remember once asking for some de-personalized stats from another govt dept, and they treated me like a misbehaved school boy for daring to ask for such things, which implied that one of their sacred cows might not be as advertised….I was sent into a bureaucratic red tape trap, so I gave up. But, I did get the stats I wanted from another state in the end and it seems to explain *exactly* why the first mob were so protective.

What I concluded from that is that it’s not just the BOM, it’s anything that is required to protect carefully crafted govt social engineering.

An ignorant population is a complaint population.

…”compliant population..”

True! Complaints are more likely to come from an informed population.

“I think it’s only fair to ask for the raw data to be published,…”

If Jen’s blog post is accurate, then the “raw” data will be the maximum and minimum values over the previous time period, or perhaps the average of these two. This would show a significantly different picture to a trailing or “running” average weighted to match LIG responses.

However, BoM will no doubt suggest that they are abiding by WMO standards, as WMO specifies an average over the period (which they are doing), but not how that average should be calculated, or even that the averaging process should be selected to approximate LIG – they IMPLY it should, but don’t explicitly say it should.

I would strongly suggest that the best approach here is not to accuse them of not meeting WMO standards, because they can defend that they technically are following the standard. Rather, we should be asking them to defend the averaging method they have chosen – if they had a choice to make on exactly how to meet the rules (which averaging method to use), then they should at the very least have a discussion paper or meeting minutes on how and why they chose the method they did. If not, why not? If not, who decided on this method and based on what criteria? Can BoM cite peer reviewed papers in support of the method they chose? If not, why not? Did BoM perform exploratory research on the effects of various averaging methods prior to deciding on the method they used? If not, why not?

What was it Mencken said? “The whole aim of practical politics, is to keep the population scared, and clamorous to be led to safety, by menacing it with an endless series of hobgoblins, all of them imaginary.” Global warming/climate change/ocean acidification are only the latest in a series of hobgoblins that included Alar in apples, DDT, Y2K, and the hole in the ozone layer, the last being a naturally occurring phenomenon which occurs for 3 months every year as the polar vortex breaks down at the end of the Antarctic winter. The greens have mindlessly embraced each and every cause as a threat to Gaia the earth mother…..and in each case someone has made a financial killing while real businesses shrivelled and died.

I think we are now in the “complaint” category, thanks to Jo and Jennifer, as we are no longer ignorant.

Not quite the raw data, as it’s only stations with a long trend, but it does suggest something not consistent with the 97% consensus.

https://realclimatescience.com/2017/09/plummeting-temperatures-in-australia/

Roger- I am in complete agreement.

No full methodology, no complete datasets means no accurate/reliable/reproducible results.

Any reputable scientist knows this.

But who says climate “scientists” are reputable (other than Flannery, scientifically ignorant celebrities and politicians)?

Because of the BOM’s failure, climate “scientists” now have someone to blame. The excuse will be “I used the BOM’s data.”

Well if that’s what it takes CC let them get on with their recantations.

The evidence supports the Null hypothesis. They’ve never had a better chance to climb down and save their credibility.

In my book, a reading of any instrument is noise until proven otherwise. In most cases, proof is very difficult to produce. Especially if you are trying to prove your theory about what you are measuring is true.

It is almost always best to assume you are lying to yourself. Check both your visible and hidden assumptions and your premises. Check again. Oh, and check one more time. Even then, expect to be surprised by what you find. It is more than likely you have missed something very important that will strongly affect the end result. There is almost always a simple truth to be discovered that will blow a huge hole in your most cherished wishes.

The bottom line is reality is what it is and not necessarily what you want it to be. Reality’s responsibility is only to be what it is. It is your responsibility for discovering what it is and acting accordingly. The quality and quantity of your life depends upon it.

Richard P. Feynman

“If you want to keep a secret, you must also hide it from yourself.”

― George Orwell, 1984

If this was happening in private industry the entire department would be fired. Why do we not hold government to the same standards of accountability as in private industry?? SMH

Because it is the government and, according to the government, whatever the government decides to do is the right thing to do. Unfortunately, the government’s standard of right is anything that expands the reach, power, and intrusiveness of government into the lives of the governed. Sadly, this is the only thing that governments are good at doing.

Government is very much like a lit match being tossed into a dry grass outback. It starts small and soon grows to being able to create its own fire storm that consumes all in its path. It doesn’t stop until there is nothing more to consume.

No, it is because there is no valid global climate science and no competent climate scientists. This morning I watched a portion of a public television program on new congressional (USA) attempts to come to a bipartisan approach to saving Obamacare. Absolutely none of them shows the slightest understanding that their efforts are in vain, because their basic premise — that Obamacare should be saved — is wildly, obscenely false, as millions of Americans in that system could tell them. Neither the people nor the government have the money to make it work; yet, as in the climate “debates”, their answer is to double down on forcing everyone into a losing system.

The Australian BOM is merely doubling down on the incompetent science. This should have been absolutely obvious to everyone in 2015, when the scientists, after generations of “global temperature measurements”, changed their program in order to argue that there was no 20-year-and-counting global warming “pause” (so that, in turn, they could double down on their incompetent “science” of the “greenhouse effect”, which the very best, in-situ evidence — that means evidence in the atmosphere itself, not in somebody’s laboratory vessel — does not exist, and can’t exist, due to the global stability of the atmosphere and the most basic, honest physics).

The bottom line isn’t even scientific incompetence, however. It is that we are in a war — in the early years of it, when most of us are not yet carrying guns, and using them — and reason was the first casualty in that war. We are seeing the world-encompassing consequences of too many, too-long-nurtured false dogmas, the worst of them now demanding to be king of the hill, against all reason.

Issues are a bit more complicated than they seem. I found that under fixed conditions, say as they are when calibrated in an oil or water bath or funnel of melting ice (zero degC), different PRT probes (and thermometers) can be dead accurate (exactly the same); however they can be quite different in service.

Encased in different materials of different diameter; response-time can be very fast or very slow, depending on the insulation (rate of heat conduction) by the material and its mass. So a probe bought from Bunnings or on Ebay, may not behave the same as one from Jacar or one manufactured to Bureau specifications. (Similarly for thermometers.)

It’s a tricky issue because slow conductivity could miss a peak that is real; while very high conductivity could invent a peak that doesn’t exist (someone walking past is not the weather). Call it the Number 1 conundrum in science.

The second issue is, that most of the Bureau’s ACORN-SAT sites (except perhaps three (amongst them Gunnedah and Moruya Heads PS)), use small Stevenson screens; which in most cases were introduced at the same time AWS became primary instruments; or when AWS were last up-graded. Also, replacing a screen or AWS often involves a site move; sometimes just over there; sometimes much further away (500 m or more). All these factors come together making it hard to work out categorically if it’s the AWS, the screen or the relocation.

Even more confusing is that at some sites all these things can happen and there is no impact on the data-stream. At others it’s an unsuspected event: at Giles in the middle of nowhere the Warakurna road just 25 m west of the screen was sealed in 2012 (you can see it on Google Earth); at Launceston, they moved the screen beside a vertical wind profiler array on a gravel or paved bed; also at Canberra and Adelaide airports, and did I mention Port Hedland or Ceduna? At Cape Leeuwin they shifted the AWS to an updraft zone on the edge of the cliff, at Low Head (Tas), it’s almost in the sea.

To create “record” and “unprecedented” heat consistent with global warming theory, they moved the sensitive instrument in its small screen at LaTrobe uni in Melbourne from a grassy woodland situation to within a few meters of a parking lot; ploughed around the site at Bourke, Dalby and Cunderdin; not to mention Badgerys Creek and Penrith Lakes; paved Woomera with bluestone gravel; and sprayed-out the grass at Amberley, Richmond RAAF, Tennant Creek and other places. At Mardie (WA), Oodnadatta and St George (Qld) the screen was relocated to a dusty paddock; at Rabbit Flat and Marree it’s beside a dusty track. At Mount Joyce (Mt. Victoria) the AWS sits on a cliff above the railway service road and on the eastern side is the Great Western Highway; at Thargomindah post office it’s down the back on the corner of Beech and Elm streets, which when recently widened and re-sealed caused maximum temperature to step up.

Having examined hundreds of datasets; checked out each site using Google Earth (pro) time-lapse satellite images; historical photographs, maps, stuff in museums and archives; I’m firmly of the view that aided and abetted by politicians, professors and the CSIRO; the Bureau is engaged in the greatest fraud in the history of Australian science; meantime AGL is laughing all the way to the bank!

Cheers,

Dr. Bill Johnston.

I specified the logical mechanism behind government growth and you specified the working details. We are saying the same thing from a different perspective.

“Government of the people, by the people, for the people, shall not perish from the Earth.” – Abraham Lincoln

Constituents in Australia seem to be the nuisances that send emails politicians do not want to bother with and the voters politicians always want to meet during election campaigns.

Mostly right, Lionel. The other side of the coin is the very powerful desire for the managers, and this especially includes the supervising politicians—The Ministers—to cover their tender nether regions: “Not on my watch!”; pure cowardice, which shows a bad lack of imagination.

Every now and then one encounters a politician or a manager who is bold enough to take one of these bulls by its horns and ‘fix it,’ whatever the ‘it’ is which needs fixing. Usually, it’s because that individual actually understands the problem. Problems they don’t or can’t understand are not ‘fixed,’ but papered over and left for their successor. It seems to be a malaise which particularly affects any highly politicized organisations, so the Civil Service and its Ministers, while a specific case, are not a special case.

To misquote E. H. Lee:

‘There are old managers and there are bold managers but there are no old bold managers.’

Protecting one’s Superannuation Scheme becomes a top priority.

Until I read this, I thought sensors, in particular thermometers, recorded data at a fixed period (e.g. 1 second), and this data was resampled after being recorded. I also thought that any reputable organisation would archive all raw data appropriately, so it could be referred to again later.

However, I am not surprised that sensors apply filters before sending the data to a recorder. I’ve worked with a few sensors which can output their data in multiple formats, and, when comparing these outputs, it was obvious that one was filtered (smoothed), and the other was not (or was not filtered the same way). This was not mentioned in any of the official documentation about the sensor, and the manufacturer had to be contacted directly, so they could explain the filtering process.

As for data spikes, how hard is it to process the data and remove points which lie outside a 3x standard deviation (for example) over a fixed-width sliding time window?
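
For what it’s worth, here is a rough sketch of that kind of check. It is only one way to do it: it compares each point with the mean and standard deviation of the preceding `window` values (so a spike does not inflate its own threshold), and it flags points rather than deleting them. The function name `flag_spikes`, the window of 10 and the sample data are all illustrative assumptions.

```python
import statistics


def flag_spikes(values, window=10, k=3.0):
    """Return indices whose value lies more than k standard deviations
    from the mean of the preceding `window` values."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mean = statistics.fmean(history)
        sd = statistics.pstdev(history)
        # Skip perfectly flat history, where the threshold would be zero.
        if sd > 0 and abs(values[i] - mean) > k * sd:
            flagged.append(i)
    return flagged


# Made-up readings (deg C) with one obvious spike at index 10.
readings = [25.0, 25.1, 24.9, 25.0, 25.2, 25.1, 24.9, 25.0, 25.1, 25.0,
            26.5, 25.0, 25.1]
print(flag_spikes(readings))
```

Note that once a spike has entered the window it widens the threshold for the points that follow, so a run of spikes would need a more robust statistic (a median-based one, say).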

Ultimately, if the core data recording and processing methods are not standardised over the entire sensor network, and are not fully documented, they might as well use the randbetween(bottom,top) Excel function, combined with the data forecasting tool, or a specialised dart-board, and a trained monkey.

I agree that it is easy to digitally filter the raw data to provide any desired time constant, but a simple, old-fashioned method would be to mount the sensors on a heat sink with sufficient thermal mass to mimic the thermal response of the old ‘mercury in glass’ thermometers.

With previous experience trying to measure rapidly changing and peak temperatures with LIG and Pt resistance meters I have some doubts about the chances of getting exact correlation. We had records of resin exotherms ranging from peak temperatures of 130-200℃ depending on the resin formulation and the time to get to the peak. Pt resistance meters gave higher peak temperatures and slightly shorter times to peak, when compared directly with the same resin.

Trying to use historical readings from old laboratory books as a guide to formulating a match was an art form rather than a science. In fact it was quicker to start formulating from scratch.

I’d vote for the “or a specialised dart-board, and a trained monkey” only trouble is BoM would train the monkey and pick the dart board! 🙁

There are standards in climate science?

Yes, but like everything else in the majority of climate science, they are changed to fit the desired outcome (allegedly).

Yes… LOW standards!

Elastic standards?

Broken elastic!

Standards require enforcement, the only enforcement I see is adherence to the narrative.

Yes, there are minimum global warming standards that must be met for any climate science article if it is to pass peer review.

Jo asks,

First I have to admit that I’m a novice at this temperature measuring business. But I’m not stupid and I know far too much to swallow all this temperature madness. What I see from where I sit is a bunch of number crunching that leads to… …and I don’t know exactly what? And I’m hard pressed to see a benefit in it.

A temperature measuring device has a certain uncertainty, a likely error in the measurement. So you note that and you go on. Does averaging add any new information to what you already have? If it does I’m hard put to think of what it is. Running average, sliding average, this, that and the other average, I’m overrun with averages that add zero new information.

A useful temperature record is still a matter of just 4 things. Location, time, date and the thermometer reading. How is anything more useful than that even possible?

A trend is tied to a location as far as I can see. Earth’s average temperature is just tits on a bull. At Alice Springs there may be a warming trend and at Denver there may be a cooling trend. But what is the planet doing? Frankly I find more useful information about that in archaeological and historical data. Yes the evidence is strong that they once grew food crops in Greenland, something you cannot do now. By golly, Greenland got colder. End of story. But did that happen to the rest of the world? Who can say?

All I see coming out of this, whether it’s your BOM or our NOAA, is ammunition for people we shouldn’t give the time of day, much less place importance on what they say, to use against us politically.

Science has failed us. Or we have failed science, I don’t know which. But all this is about as useful to the guy living on side street Australia or side street USA as a case of the plague. I don’t want the money wasted anymore on keeping, no sometimes generating statistics out of thin air. How do we shut these people off? And we need to because if we don’t they’ll drown us in useless numbers at our expense.

And this thread among others is good evidence that we’re already drowning in useless numbers.

If someone wants to do all the crunching let them do it on their own time and their own dime and never, never let them influence government policy.

And now I’ll get told why I’m wrong and the red thumbs will start. But I don’t care. Arguments over even 10s of degrees of temperature much less 10ths of a degree don’t reach me because I know I can’t do anything about it. And I know I’m not causing it by driving a car or running the lights late at night, Al Gore’s latest “Oscar winner” notwithstanding.

One could view science like politics, law, economics and the Fourth Estate as observational, reasoning, reporting, ordering and counting tools, tools that enable analysis and help create a semblance of bottom up order in chaos. The corruption imposed by top down ideology aided by middle management laziness and a wider absence of integrity appears to have successfully twisted these indispensable tools into weapons against reason and in support of a wanton societal destruction. Inevitably, the tools must number among the casualties. After all, a tool is only ever as useful as the individual that wields it.

As Harry Dale Huffman at 4.1.1 writes:

Precisely. And yet, we all know the aphorism, ‘bad workmen blame their tools‘. Yet we hear, NOT A WHISPER.

It seems extraordinarily revealing, betraying even, that today there is no whisper. Blaming a person or thing is politically incorrect, culturally passé. Instead, blame lingers and hides in ‘systems’, everywhere and nowhere, a toxic infection of cultural nihilism best expressed and promoted by the eco-Marxist Left in official totalitarian enculturation by ‘government’, ‘academia’ and ‘science’.

A key way to fight back in this war is to go after the individual, by name. One will never ventilate or purge the Australian Bureau of Meteorology of this infection until one is prepared to name the names, the building and the very number on the door of their office.

Attempting to shape the news?

https://www.cnsnews.com/news/article/melanie-arter/wh-homeland-security-adviser-asked-blame-hurricanes-climate-change

‘(CNSNews.com) – White House Homeland Security Adviser Tom Bossert said Monday that it was beyond the scope of his abilities to determine what caused Hurricanes Harvey and Irma, which made landfall in the United States in the past two weeks, and Hurricane Jose following behind in the Caribbean.

CNN’s Jim Acosta asked Bossert to weigh in on whether the Trump administration should look at the link between climate change and the two most recent storms to hit the United States.

“The previous administration saw a connection between climate change and homeland security and that the frequency and intensity of powerful storms like Harvey and Irma could pose a problem for future administrations. You could have FEMA budgets that can’t keep up with the demand when you have powerful storms hitting the country,” Acosta said.

“Is that something that you think this administration should take a look at? We know that the president pulled out of the Paris climate accord. Are these storms giving this administration some pause when it comes to the issue of climate change and homeland security?” he asked.

“I was here in the 2004 cycle of hurricanes four and six weeks that hit Florida. I think what’s prudent for us right now is to make sure that those response capabilities are there. Causality is something outside of my ability to analyze right now,” Bossert responded.”

I’ve begun to realize something, perhaps too late but I finally got the message. Interesting, is it not, that science has nothing in it that even pretends to say to those using or pretending to use it, that you must remain honest or that you cannot lie or cheat your way into the science hall of fame. There’s nothing in science that says you may not hijack the scientific method to further your own personal goals or those of a particular ideology. So we tend to trust science. After all, look at the huge body of knowledge gained, sometimes at great personal risk as in the case of Galileo Galilei. Now the science train is off the tracks yet it’s still barreling forward at high speed down what must ultimately be a line that ends at the edge of a cliff.

It’s the same with many things. We institute governments because we know we must have certain things individuals can’t manage by themselves: a common defense, a system of courts, a system for electing those who will hopefully be running all the arms of government for our benefit. In the U.S. the whole thing was based on government of the people, by the people and FOR the people. Yet that was the very first thing that went wrong when we decided, against George Washington’s very sound advice, and we formed the very first political parties. So from the start it went from, of the people, by the people and for the people, to of the people by the party and for the party.

And if you look at the very concept of government there was never even a thought given to whether it had anything about it that said it must run properly for the benefit of the governed. Looks just like science doesn’t it? Look at all the good to come of having governments: we can lock up murderers, thieves and the like when we catch them and even in some places we execute murderers with a clean conscience because laws tell us that’s what to do in certain cases; we can build very useful interstate highway systems; social services; so many things. Yet Donald Trump, the most unlikely candidate in the world, got elected in spite of himself because too many people finally noticed too many things gone wrong. And it started when the United States Constitution was first ratified by those original 13 states.

Now marry the 2 and we have this insufferable jackass named Al Gore making yet another movie with which to win another Oscar and then try to beat the world over the head with it to make us believe, make him richer than he already is and our governments more powerful than they already are.

And it’s our fault, you and me and every other citizen of this world for not keeping close watch over what was happening. We were and are the last defense against abuses and where have we been? So many millennials know the name of their favorite sports star but don’t know who represents them in Congress. And recent TV interviews by Jesse Watters (Watters’ World) tell an even worse story.

I think the word you are looking for is “arcane”. As far as I am aware, there are no internationally agreed standards for what measuring equipment is used, how it is calibrated and against what standard, where it is sited, how it is positioned, how the readings are taken, who takes the readings, when they are taken (time is a critical variable), … and so on. You get the drift. I think the best we have managed, internationally, is some loosely worded “recommendations” regarding “best practice”.

If you are doing some experiment in a laboratory, you will go out of your way to ensure that there is nothing that can interfere with the accuracy of measurement, and validity, and the rigour, and repeatability, of the methodology used in the experiment. You need to document everything and anything that might interfere with the observed results, at the time and place, where they were recorded, and how you have addressed those issues.

None of this applies to meteorology, in the wild. I have heard it said that, “The nice thing about meteorological standards, and practice, is that there are so many to choose from”.

If some people are rorting the system for political or financial gain, how could we ever prove it?

Arcane, as in unknowable by anyone not already in the know. Or so it appears.

I spent 47 years designing and implementing software and if it’s any comfort, that too is an arcane art. There are a lot of published books on how to do it but no two agree on very much. And no two programmers do it the same way either. Give me and someone else equally qualified the same assignment, same specs, every detail about the required product the same and you’ll come up with two programs bearing about as much resemblance to each other as an aardvark has to a zebra.

And the world now depends utterly on software, these days even for your safety: if you fly, the average modern jetliner has a captain who could not pull off the landing on the Hudson River that Sullenberger did. They rely on the flight management computer.

And now they talk about having software design software. God help us.

Lionell Griffith should have an interesting comment or two on the subject. We shall see.

“They rely on the flight management computer.”

There is a significant difference between software development and testing for a PC, tablet or phone and for an embedded device – most especially “mission critical” devices, where human life is at stake. A typical flight management computer on a commercial aircraft that is *required* to fly the aircraft is not a single computer, but typically 3. All from different hardware suppliers and all running software from different suppliers. The software (usually “firmware”) has a limited number of input and output combinations and can be proven correct. The hardware has watchdog timers etc. It’s very safe – certainly at least as safe as any other “required” hardware like hydraulics. That is, things can still break, but there are redundant, independent backups and things fail “safe” as much as can be.

A PC or even a phone is a significantly different environment with generally far less severe consequences for failure and a far higher focus on flexibility, not reliability. The entire design, construction, commissioning and maintenance approach is completely different.

Just have a look at the hardware or software requirements for, say, a pacemaker. Each and every component of both is tested and tracked at a level that would amaze you if you haven’t seen it before – and that is “mission critical” at the individual level, not the group level like a commercial airliner.

I agree with you. And yet if you suddenly confront that flight management computer with only 3,000 feet of altitude and both engines dead it will not know what to do. And while some captains will manage to pull it off, those who’re lost without that computer will be dead within the next few minutes.

Well, they won’t make that mistake, again … 😉

The trouble is that when they make that mistake — their one and only chance to make it — a hundred or two paying passengers also make the same mistake — involuntarily.

It’s funny in the peculiar sense that we are still losing airplanes, even with all that technology.

Fortunately, with all the hundreds of airplanes in the air at any given time, we don’t lose very many and most pilots fly their whole career without significant incident and retire from flying still in one piece.

Sorry Roy. My comment appears more callous than I intended. I should have explained the background.

All trainee pilots in the Royal Air Force, flying their first solo in a jet, are told, “Don’t crash the aircraft”. On the rare occasion when a trainee asks, “What will happen if I do?”, the instructor says: “Well, you won’t make that mistake again, will you?”.

RW,

I did realize you were not very serious with that comment but I’m still serious about the consequences of too much reliance on the computer. But let’s agree that we agree about not crashing the airplane.

I don’t remember what my instructor said when he grabbed my log and signed off on that first solo but once in the air it was a very strange feeling when I looked at that empty seat beside me and realized that I was it, there was no one but me to blame or praise about the outcome.

After so many years as a programmer I have an innate distrust of software. You don’t put your life in the hands of a programmer. I’m probably overboard but the record so far is not really convincing.

You’re a sharp guy. Do you trust a programmer? Remember, hundreds of thousands of lines of code all have to be not only error free but have to anticipate everything — a very tall hurdle to get over.

That’s not really a fair question to ask so you don’t have to answer if you don’t want to. We all have no choice but to trust that programmer because that’s the way things are going. 🙂

Let me see if I can provide a short list for you of some of the rorters and some evidence that shows they’re rorting the system:

Tim Flannery

https://australianclimatemadness.com/2012/03/08/flannery-out-of-his-depth-as-flooding-rains-return/

Andy Vesey

https://myrenewables.wordpress.com/2017/08/10/agl-says-solar-wind-storage-cheapest-way-to-replace-coal/

Alan Finkel

https://cleantechnica.com/2017/07/11/investors-prefer-wind-solar-theyre-cheaper-coal/

Al Gore

https://youtu.be/vFK-UTGH1Zw

John Cook

http://daviddfriedman.blogspot.com.au/2014/02/a-climate-falsehood-you-can-check-for.html

The Australian Broadcasting Corporation

http://www.abc.net.au/news/2017-09-11/gas-not-coal-the-fix-to-australias-soaring-electricity-prices/8890818

Bill “Blackout Bill” Shorten

http://www.heraldsun.com.au/blogs/andrew-bolt/column–how-could-bill-shorten-say-such-mad-things-about-global-warming/news-story/6a6952c8d6ccc707b76b75f9789c0d6b

How do you prove it? Watch their lips.

I would have thought, to be consistent, the standard should be a sensor made to specifications so it behaves like a standard LIG instrument. I imagine such a sensor would perhaps look like something encased in glass or perhaps a LIG instrument read electronically every few minutes with low power input.

RobK:

There are problems with LIG thermometers as well. As they age, readings can change through hardening of the glass. The readings from one thermometer to the next can vary. Modern thermometers are ‘calibrated’ at a high and a low temperature, e.g. 0℃ and 100℃ for a common mercury -10℃ to 110℃ thermometer. The scale is then marked linearly, but the bore can vary, so intermediate readings may be in error. Two boxes (20 thermometers in total) checked at 25℃ had readings ranging from 23.4 to 25.2℃. Most were close to 25℃ but any claim that they were exact was nonsense (only 1 of the 20 read 25.0℃). They were checked at full immersion in a water bath, not in air, which introduces another possible error.

There are calibrated LIG thermometers which come with a table of measured error readings at various intermediate temperatures, but these are expensive, have thinner bores for more accurate readings (hence longer, less easily handled) but don’t last for more than a few years unless recalibrated. I doubt that the Public Service purchasing Department bought any. I also read that the BoM took these ‘off the shelf type’ thermometers and used them without checking. If so, further proof of incompetence.
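
For anyone wondering how such a table of measured errors gets used in practice, here is a minimal sketch, assuming the table is a list of (indicated, true) pairs and that corrections between points are linearly interpolated. The function name `corrected_reading` and the calibration points are invented for illustration; they are not from any real certificate or from the BoM.

```python
def corrected_reading(indicated, table):
    """Correct an indicated temperature using a calibration table.

    table is a list of (indicated, true) pairs sorted by indicated value.
    Corrections between points are linearly interpolated. Readings outside
    the table range are rejected rather than extrapolated.
    """
    if not table[0][0] <= indicated <= table[-1][0]:
        raise ValueError("reading outside calibrated range")
    for (x0, y0), (x1, y1) in zip(table, table[1:]):
        if x0 <= indicated <= x1:
            return y0 + (y1 - y0) * (indicated - x0) / (x1 - x0)


# Hypothetical calibration points (indicated C, true C), invented for illustration.
table = [(0.0, 0.0), (25.0, 25.4), (50.0, 50.2), (100.0, 100.0)]

print(corrected_reading(24.6, table))
```

A thermometer checked only at 0℃ and 100℃ would pass this table at both ends and still read 0.4℃ low at 25℃, which is the bore problem described above.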

I have a set of NIST calibrated glass thermometers (true!) and they each have calibration info as you mention and also a schedule for re-calibrating. According to the sheet they are 26 years overdue (also true!).

Very good information clearly ignored by our BOM. You would think their full time 350 scientists might have made these points instead of producing glossy brochures on Climate Change.

So 0.5C is a reasonable variation for the bulk of historical records without any real change in regional temperature. This questions the fundamental claim of century warming in the first place, not just the elimination, discarding, ignoring, homogenization, area averaging, massive interpolation and extrapolation of data and of course the alterative of source data.

Then there is the question of relative from a 1910 thermometer to a modern one plus the connection of a modern thermometer to an electronic one. Resolution is not accuracy and increasing resolution raises more serious questions about older records and absolute accuracy. Considering the entire $1,500,000,000,000 a year is being spent to prevent alleged shifts in temperature of 0.8C from 1900 and 0.0C in the last twenty years, you could be forgiven for thinking Global Warming was all made up by the UN committee for Climate Change. That is before you even get to the disproven hypotheses of CO2 driven warming and man made CO2 change.

Alternative –> Alteration. No data should be changed! It should be recorded and kept for all time without alteration.

To change raw data is to commit a great sin in science. It used to be kept longhand in ink, never to be altered.

Change interpretation by all means, but never alter data. I learned this in my first experiment. It remains an absolute law of real science. To read of routine alteration of data as a common practice in the BOM is beyond belief. That is like fiddling the books to balance the ledger. It should be criminal and at the very least unethical.

In fact the department head would not even accept records in biro. Everything had to be logged carefully with a fountain pen, if quills were not available. The passion for accurate, legible and precise measurement was part of the science culture for all time. To read of people casually lopping off 0.4C is a new world of carelessness abhorrent to real scientists.

UAH for Australia over the last 20 years

https://s19.postimg.org/t849zahv7/Australia_20_years.png

Essentially NO WARMING !!!

AndyG55 what is the source of your graph? And is this UAH V6 data?

http://www.nsstc.uah.edu/data/msu/v6.0/tlt/uahncdc_lt_6.0.txt

Neville what is Lomborg’s view of the hiatus?

It looks like the start of Beethoven’s Fifth to me. Are you sure that it is not a snapshot from an oscilloscope? 😉

With one ‘thumbs down’ vote it looks like someone thinks that’s bad news.

It was hard to concentrate on the temperature graph with all the surrounding distractions!

Here we go again. BOM, BOM, BOM, BOM ….. Could BOM bashing become a new national pastime? (And maybe give the Poms a rest.)

Did you miss the admission from BoM management in 2014/15 that weather and climate change media releases that did not match BoM historic data records were due to errors and omissions? That came after the Minister responsible asked for an explanation.

Or that more recently BoM claimed that weather stations were faulty when it was pointed out that temperatures were being reported slightly higher than actual readings, obviously to create a long term upward trend?

Yep. I don’t see a problem in that.

News headline,

“BoM bashing bankrupts a broken bureaucracy”

When a failed and, by its own admission, error-strewn organisation hides facts to protect itself, then the only recourse is to bash it into honesty.

When a failed prophecy has religious adherents (Watt et al) who try to deflect criticism from the ‘prophets of doom’ then you know that forlorn attempts are being made to hide falsehoods, and revelation is not far off.

What’s up with that, Watt.

https://wattsupwiththat.com/2017/09/11/ooops-australian-bom-climate-readings-may-be-invalid-due-to-lack-of-calibration/

Guess it was asking for that Red Thumb lashing. Well done to everyone who saw it as one in-ten-ded.

A one second wafting hot spike,

Gives a false average temperature hike,

Which for sensors is crude,

And with past records skewed,

Are the methods the B.O.M. like.

First we were presented with tree ring data which purported to show past temperatures for our Earth.

Maybe it did give some sort of indication, but with obvious issues with absolute values.

Then with the advent of alcohol and mercury in glass, there was an extraordinary jump in accuracy and reproducibility of recordings.

The fall of science began when these two data sets were joined seamlessly by politicians to “prove” that global warming was occurring.

We have here now a similar situation.

The liquid in glass thermometer readings cannot simply be tacked on to tree ring estimates and neither can the electronic instrument data be “tacked on to” the LIG data.

They are NOT equivalent.

It isn’t hard to estimate the response time of a mercury thermometer as being in the order of a minute when measuring air temperature. The response time of the new electronic instruments is probably a fraction of a second.

These data sets MUST be reported as separate assessments of air temperature and any “blending” of such data is clearly unscientific.

My doctor once used an LIG thermometer under the tongue. Remember how long that took to max out. Now he uses an electronic instrument that picks up body temperature through my earhole in a flash. The two readings are only equivalent if the electronic device is properly calibrated.

With the BOMs new devices I can see the possible need for calibration that works on a time weighted average that imitates the LIG response time to variations in air temperature.
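
To put a rough number on that idea, here is a minimal sketch of one way a fast sensor could be made to imitate a slow one: a first-order lag (exponential smoothing) applied to one-second samples. The 60-second time constant is an assumption taken from the "order of a minute" estimate above, the data are invented, and nothing here is claimed to be what the BOM actually does.

```python
import math


def lag_filter(samples, tau_s, dt_s=1.0):
    """Apply a first-order lag (exponential smoothing) to evenly spaced samples.

    tau_s is an assumed sensor time constant in seconds and dt_s is the
    sampling interval. For a step input the output follows 1 - exp(-t / tau_s).
    """
    if not samples:
        return []
    alpha = 1.0 - math.exp(-dt_s / tau_s)  # fraction of the gap closed each step
    out = [samples[0]]
    for x in samples[1:]:
        out.append(out[-1] + alpha * (x - out[-1]))
    return out


# Illustrative data only: steady 25.0 C air with a 3-second puff at 26.5 C.
raw = [25.0] * 60 + [26.5] * 3 + [25.0] * 60
smoothed = lag_filter(raw, tau_s=60.0)

print(f"raw max      = {max(raw):.2f} C")
print(f"smoothed max = {max(smoothed):.2f} C")
```

With these made-up numbers the raw maximum is the full 26.5 C spike, while the lagged series barely moves off 25 C. Whether a single time constant is a fair model of a mercury thermometer inside a screen is a separate question.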

The inclusion of obvious outliers in final data presentations is scandalous.

But of course this isn’t science, it’s politics.

KK

Every crooked step of the way. The trouble is that no one replaceable will admit to heading in the wrong direction so ‘the system’ grinds on toward self-destruction, the pre-ordained destiny orchestrated by the untouchables.

I thought tree rings had been debunked, in having a closer correlation with rainfall than temperature?

My apologies to Jo for not having read the whole post.

After reading Graeme’s comment at no 16 it was made clear that my assumption that meteorology did not consider heat transfer time was wrong.

Having gotten to the Maryborough graph which suggested that an obvious outlier was being included in data I went off.

The main point was that splicing data from two very different methods of retrieval would not be accurate nor “scientific”.

KK

Tree ring data and the ratio of C13 / C12 have been used to claim that the current atmospheric ratios of C13 / C12 ‘prove’ that a small increase in atmospheric CO2 levels must be man made (from fossil fuels) rather than natural.

Inconveniently, a new study led by the Scripps Institution of Oceanography at the University of California San Diego has found that plants discriminate between the carbon isotopes, preferring one above the other.

What this means is that assessments of past atmospheric C12 / C13 ratios from tree ring samples are wholly unreliable. That means that claims, based on those and current ratios, that man is the dominant source of increased atmospheric CO2 are scientifically meaningless.

It means that no ‘anthropogenic footprint’ can be identified, and any claims that it can are fundamentally flawed.

Same applies to C13 / C12 ratios from ice cores.

How is there bias in an ice core?

It is not a bias in the ice cores – it is a flawed understanding of the C13 / C12 ratios found in CO2 trapped in ice cores.

The CO2 trapped in ice core samples is taken to represent the atmospheric ratios of C13 / C12 at the time it was trapped. What has not previously been understood is that plants are selective and C3 plants prefer (select) C13 over C12 thus decreasing the C13 in atmosphere. Ice cores from ice ages are from times when both plant growth and the area of the globe they could grow across was limited and thus less C13 was being removed from atmosphere and the C13 / C12 ratio showed a higher C13 concentration.

The study has concluded that plants’ preference for C13 distorts current C13 / C12 ratios in the atmosphere, and that the distortion is a reduction in C13. Because that reduced C13 / C12 ratio is very similar to the ratio in fossil fuels, it has led to claims that there is an anthropogenic footprint in today’s C13 / C12 ratios because there is less C13 than in the past.

The current ratio is not a reliable method of ‘finding’ an anthropogenic footprint from fossil fuels.

How has this changed over time such that it has caused bias?

@ Gee Aye. Is stupidity a requirement for being a warmist alarmist ?

@Gee Aye – try reading and understanding what has been already explained. Why is it that you warmists have such difficulty with critical thinking and understanding simple facts ? Is it because facts fly in the face of your religion?

It’s good to hear that Roger.

The problem is that they will latch on to any concept no matter how tenuous and try to make it say “man made global warming”.

CO2 is the classic example whereby it was promoted as some sort of special heat trapping gas.

Unfortunately they have been allowed to get away for too long while the basic physics is ignored.

KK

We could sell our argument better by illustrating that nature produces 750 gigaton annually and humanity only 29 gigaton.

It’s argued that only half of this industrial CO2 is absorbed by carbon sinks, while the other 50% builds up in the atmosphere and warms the planet. This increase in CO2 is remarkable but not significant, unless of course the hiatus goes on for another five years.

Another way to highlight CO2 in the atmosphere is to consider a football stadium with 10,000 people packed in. Adding just 2 more people is just like increasing CO2 in the atmosphere by 200 ppm. In terms of the crowd one would say that it is a negligible increase.

Why not the atmosphere?

Regards GeoffW

Gordo – couldn’t agree more, and the other part of that is that the amount of CO2 produced by man is less than the uncertainty error bars of the amount of natural CO2 emissions!

That is incorrect

You’ll have to come up with an alternative proposition, otherwise I’ll assume you are talking through your hat.

Gordo, I think it goes something like this: is too, is not, is too, is not, is too, is not.

Which is the level of a five year old and not much of a sensible conversation, but you have to remember he will have his hands over his ears and be chucking a wobbly at the same time, which makes it hard for him to reply.

@ Gee Aye – why must you confine yourself to propaganda rather than facts that even the IPCC accepts? Is it because of your warmist religionist beliefs? If so you have more in common with ISIS than with normal human beings.

Roger, the relative plant uptake of chemically identical but slightly heavier C13 (1%) against C12 (99%) is arguable. However, as I have written many times, the proportion of C14 (half-life about 5,730 years) is not debatable. As C14 does not exist in fossil fuels, you can absolutely measure the amount of fossil fuel CO2 in the air. Around 1954 when radiocarbon dating was invented, it was noted that the amount of fossil fuel CO2 was under 2%. This was the Suess effect (despite the later Wikipedia editing to make out it is C13/C12).

After the aerial atom bomb tests after 1965, we even have the relaxation time for C14 based CO2 as 14 years by actual measurement.

So we know absolutely that the 50% increase in CO2 since 1900 is NOT man made. We know absolutely that CO2 vanished into the huge oceans, half every 14 years. We know CO2 levels are set by ocean temperature and Henry’s law. There is no need to go to ice cores to create conjecture based on plant absorption. However as real factual science is ignored to feed the alarmist fantasy and the Green industrial machine, we may as well examine the hypothesis that increased CO2 increases planet temperature. Also not true.

Australian BOM temperature data; one word comes to mind- INCOMPETENCE. And that is being generous!

GeoffW

G’day Geoff,

The BoM/Weatherzone have given me a local record. An increase by a full degree centigrade in observed (by me, so ultra reliable) error in the actual v forecast minimum temp at Mudgee airport. That error is now at plus or minus 5 (5.00 to emphasise the position of the decimal point) degrees – yesterday’s forecast = 10C, today’s actual min = 5C.

http://www.weatherzone.com.au/nsw/central-tablelands/mudgee

The link will only show this difference today, and may have already been updated beyond verification.

The previous record of plus or minus 4, lasted for several weeks.

Cheers,

Dave B

PS Rather off topic, but I needed to record this discrepancy. D

Well, in a peculiar way I hope you’re right G W.

If it’s not incompetence then it’s manipulation. And, to me, it has that smell about it. It’s the smell of infiltration and insurgency by the disciples of Antonio Gramsci. The green/left totalitarians.

Either way the organisation is long over-due for an efficiency and effectiveness audit by the Auditor-General and an independent external body. Perhaps a Royal Commission is going too far at this stage, but then, given the cost to the nation of the policy stupidity that flows from the BoM’s work, perhaps not.

A total re-organisation of staffing, structure and legislative framework is needed. It’s current set-up is not fit for purpose.

Its.

On this occasion, maybe the BOM is OK. Given the tech of Pt resistance thermometers it certainly would be possible to design one that has the same dynamics as a LIG thermometer. Question is, did they do this right? There is also the question of electronic interference in the A/D conversion. I would still be looking for outliers myself. So IMO the broader question is not the point readings but why are there observed outliers if the dynamics of the thermometers are right. This for example might suggest a poor observation environment. The other question then is if the thermometers are dynamically similar to LIG devices then why sample them so quickly when they can’t respond that fast anyway? Given the BOM here is suggesting they have slow responding devices, there can also be little harm in averaging them. Interestingly, outliers (noise) would indicate faulty hardware in this case. I wonder if the outlier data is used to identify faulty devices?
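
Looking for outliers in a one-second series does not need anything fancy. Below is a minimal sketch, assuming a spike is any sample that sits more than a chosen threshold away from the median of its neighbours. The function name `flag_spikes`, the 30-sample half window and the 0.5 C threshold are all illustrative choices of mine, not anything from the BOM's procedures.

```python
from statistics import median


def flag_spikes(samples, half_window=30, threshold=0.5):
    """Return indices whose value differs from the local median by more than threshold.

    half_window (samples either side) and threshold (degrees C) are
    illustrative choices, not values from any bureau's procedures.
    """
    flagged = []
    for i, x in enumerate(samples):
        lo = max(0, i - half_window)
        hi = min(len(samples), i + half_window + 1)
        if abs(x - median(samples[lo:hi])) > threshold:
            flagged.append(i)
    return flagged


# Illustrative data only: a slow ramp from 20.0 C upward with one 1.5 C spike.
readings = [20.0 + 0.005 * i for i in range(120)]
readings[70] += 1.5

print(flag_spikes(readings))
```

If the sensors really do respond as slowly as a LIG thermometer, a check like this should flag almost nothing, and anything it does flag would point at electrical noise or faulty hardware rather than at the air.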

You could be onto something: what if the BOM received a batch of thermistors instead of thermometers? Going by their abilities with math, spelling won’t be any better.

What is interesting is how this can play into government policy. It’s clear that AGL won’t play ball in keeping Liddell going, and high electricity prices and intermittency are our future. The only solution is to withdraw from Paris and remove the RET. An audit of BOM would give the government an excuse to suspend the RET whilst it happens, and when (as I am sure will be the case) the BOM’s methodology is found to have created a warming bias, the government has the excuse to back down from its support of a RET. Not only would this save Australia in terms of energy policy, it may save this government from certain defeat. It is time that governments decided to set the excuse framework to get out of the Paris agreement rather than totally stuff the Australian economy so that we plunge down the slippery slope of lower personal wealth and diminished economic growth.

For years politicians bragged that Australia is one of very few signatories to the Kyoto Agreement that has achieved all greenhouse gas emissions targets established at the conference. It has been achieved by direct action that included policies such as the once off trial incentive to try RET of 2 per cent. But later Labor raised it to 22 per cent which former Coalition Government PM Howard recently said should not have been done. He also expressed concern over the increasing energy crisis.

Now our coal and gas are being exported to provide low cost electricity in other countries, many of which have not achieved Kyoto targets.

This situation highlights the poor quality of our present political class.

The only reason Australia achieved its Kyoto target was by DCC&E’s fiddling with CO2 numbers, just like BOM’s fiddling with temperatures.

DCC&E had measured quantities of CO2 from energy, transport, industry and the like based on ABS data which showed an increase of around 130 Mtpa.

DCC&E ESTIMATED CO2 savings from land use and forestry at a bit less than the 130 Mtpa required to get below the 8% increase required by Kyoto. Under UN rules estimates were allowed for LU&F calculations.

Using an Excel spreadsheet, DCC&E applied factors to changed definitions, not physical changes (i.e. forests to national parks, farmland to grassland, etc.), and guess what: Australia achieved its Kyoto target.

I queried DCC&E’s processes on numerous occasions and got the usual over the top bureaucratic responses, just like BOM.

While Tony Abbott canned the DCC&E, many of its high priced help just rolled over into other similarly useless Green driven bureaucracies.

13

Referring to the table above for the response time of LIG thermometers from 1912, I point out the response times it gives in air:

| Air speed | Response time |
|-----------|---------------|
| 3.6 km/h  | 58 seconds    |
| 18 km/h   | 33 seconds    |
| 36 km/h   | 25 seconds    |

What chance is there that the wind speed inside a Stevenson is high enough to give a response time less than 30 seconds?

If the BoM were averaging readings from the electronic meters to try and match LIG readings then 1 minute averaging would be used.
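To put rough numbers on this, here is a minimal Python sketch. It is not the BoM's method, just an illustration under stated assumptions: the air temperature series is invented (steady 20.0C with a ten second gust at 21.5C), the slow thermometer is modelled as a simple first-order lag, and the 58 second figure from the table is treated as that lag's time constant, which the table itself does not confirm.

```python
import math

def running_mean(samples, window):
    """Trailing mean over `window` consecutive one-second samples."""
    means = []
    total = 0.0
    for i, value in enumerate(samples):
        total += value
        if i >= window:
            total -= samples[i - window]  # drop the sample that left the window
        if i >= window - 1:
            means.append(total / window)
    return means

def first_order_lag(samples, time_constant_s, dt_s=1.0):
    """Model a slow sensor as a first-order lag (an assumption, not a BoM spec)."""
    alpha = 1.0 - math.exp(-dt_s / time_constant_s)
    reading = samples[0]
    out = []
    for value in samples:
        reading += alpha * (value - reading)  # move part of the way to the air temperature
        out.append(reading)
    return out

# Hypothetical air temperature: steady 20.0 C with a 10-second gust at 21.5 C.
air = [20.0] * 300 + [21.5] * 10 + [20.0] * 290

raw_max = max(air)                                            # one-second "record"
one_minute_max = max(running_mean(air, 60))                   # one-minute mean
lagged_max = max(first_order_lag(air, time_constant_s=58.0))  # slow thermometer

print(raw_max, round(one_minute_max, 2), round(lagged_max, 2))
```

In this made-up case the one-second maximum records the full 1.5C gust, while the one-minute mean and the lagged sensor both peak only about a quarter of a degree above the steady temperature.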

The old saw “If the only tool you have is a hammer, then everything looks like a nail” applies. The BoM is trying to find proof that Australia has been warming and has used the old slow response readings to compare with the fast response modern ones. Any noise giving higher readings will be welcomed as “proof” that there is warming, and their jobs are safe and even better, they are “saving the world”, so any doubts would be suppressed internally and, as best they can, externally. Hence discarding of any contrary readings and acceptance of obviously faulty ones.

There is also a ‘fault line’ between those concerned with forecasting the weather in the next few days (the traditionalists) and those concerned only with generating political pressure. The latter group is obviously in control and forcing the evidence to conform to its wishes. Time for a very thorough clean out.

131

Some more on Australian temperatures

https://realclimatescience.com/2017/09/plummeting-temperatures-in-australia/#comments

and comments

20

I’m wondering if there’s much point pursuing the BOM on this. If you look at the Meteorology Act 1955 it is quite clear that their function is broadly about forecasting weather and has no role in analysing climate. So if a bunch of meteorologists want to poison the long-term record by changing instruments with no cross-calibration, that seems to be their prerogative.

What we need to be on the lookout for is anybody who uses the poisoned data.

What the BOM really should be taken to task for is any pronouncements they make on “hottest year ever”. That is obviously not meteorology and they have no standing to say such a thing.

And if they ever want to start a BOC, instrumentation should be pretty easy. Simply plant bristlecone pines next to every Stevenson screen — or just one for the whole country might suffice — and take a core every century or so. Then they’ll be able to tell us about “hottest year ever”.

130

*chuckle*

The link between BoM and the MSM needs analysing because propaganda is all about half truths, or mixing truth with lies.

‘Andrew Watkins, manager of extended and long-range forecasts at the Bureau of Meteorology, said the real reason for the warmth was the persistent high pressure seen particularly during early winter.

“But you also have to add to that the long-term warming trend,” he said.’

ABC

41

The persistent high pressure is a natural situation every winter. It gives us persistently strong Easterlies in the tropics every winter!

52

Tom, the Subtropical Ridge has been travelling too far south in the Austral winter.

The Klimatariat say it’s because of industrial CO2 molecules, but I beg to differ.

51

I’m a little confused. The BOM call the measuring device a “platinum resistance thermistor” and then later refer to it as a “platinum resistance thermometer”. Some in the know are calling BS on the former, saying such devices don’t exist.

But the latter apparently generates heat while operating, which if true is surely of concern. The BOM’s latest statement refuting the claims of Jennifer Marohasy looks like the ramblings of someone with something to hide and little knowledge of the subject at hand.

91

Electronic circuitry using thermistors suffers from ‘bounce’. Any change in whatever the circuit is doing causes thermal feedback as well as electrical. It doesn’t matter how the thermistor is being used: as a direct sensor for measuring temperature, or for thermal stabilisation such as amplitude stabilisation of an oscillator, it suffers from several seconds of instability after any change or adjustment while the thermistor reaches a new thermal equilibrium. Several seconds.

I usually modified such designs to render the thermistor unnecessary, as they were far too sensitive; photo-electrical feedback was much better.

If the BOM is using thermistor-based devices then any eddy in the air is a disturbance which will cause measurable instability. Even a ten second averaging interval is too short. The US ‘standard’ of 5 minutes is more like it. One company I worked for used silicon transistors as CCDs (Constant Current Devices) as the temperature sensing element for the data-loggers it made. Temperature change created a small current change. These were vastly better behaved than any thermistor, very stable and surprisingly linear.

80

I’ve never used a platinum Resistance Temperature Detector (RTD) in my career. It’s time, maybe, that I did. So I’ve done a bit of research into them to fill myself in on them. They’re quite affordable with a typical price of c. USD95.00 and up depending on your application and requirements. They are NOT thermistors except in the broadest of terms.

There are basically four types of industrial temperature probes which suit electronic measurement:

0. A silicon transistor: used as a constant current device. It has a limited range and is reasonably linear, though not over the whole range. It’s not bad for accuracy. It tends to drift quite strongly without compensation, so it’s not a suitable sensor for applications where better accuracy is required.

1. Thermistor: (from Thermal Resistor) a resistive device made out of metal oxides, usually formed into a bead and encapsulated in epoxy or in a glass envelope. Thermistors generally have a negative temperature coefficient, which means the resistance decreases as temperature increases. They are very sensitive, and are typically non-linear with a limited temperature range.

Thermistor temperature-sensing probes are considerably less expensive than those using RTD elements. High resistance equates to high sensitivity, with a thermistor providing a larger voltage drop over a specific temperature change using the same measuring current. Self heating is more problematic with a thermistor because of its small size. This gives rise to “bounce” with a step change and a longish settling time. Thermistors are best suited for low cost applications over limited temperature ranges. They can be used to thermally stabilise a transistor CCD probe’s drift, but life gets quite complicated here with calibration.

3. Thermocouple: is made of two dissimilar metals welded together. The reference junction is typically part of the instrumentation and the opposite junction is known as the sensing junction. When the sensing junction is heated, the opposing metals create a known thermoelectric effect proportional to the temperature difference (ΔT) between the two junctions. By compensating for the known temperature of the reference junction, the temperature at the sensing junction can be determined. Different types provide differing thermoelectric effects for differing temperatures and environments.

Extremely high temperature range: thermocouples can be rated as high as 1700°C. Their simplicity enables them to withstand extreme shock and vibration. Small size/fast response: thermocouples with exposed or grounded junctions offer nearly immediate response to temperature changes. Thermocouples are best suited to high temperatures and extreme environments.

4. Resistance Temperature Detectors (RTDs): usually made from platinum. Can be wire wound around a ceramic or glass core or cemented to a ceramic substrate. The unit is usually encapsulated in various materials depending on application. Air temperature sensing devices are not encapsulated (open) or only lightly so (encapsulation determines response time for ΔT). Platinum RTDs have a positive temperature coefficient, with resistance increasing linearly with temperature. Measurement range can be -200°C to 600°C. This is probably the most commonly used sensor.

Each type of temperature sensor is best suited for a particular range of temperatures.

The Platinum RTDs have some significant advantages:

• A wide temperature range (approximately -200 to 650°C+)

• Good accuracy over the wide range (better than thermocouples)

• Good interchangeability (highly standardised)

• Excellent long-term stability (well, platinum is a “noble” metal …)

• Simple calibration

RTDs can be used in all but the highest-temperature industrial processes and can be made from materials including, but not limited to, Copper, Nickel and Platinum. They all measure temperature through the change in electrical resistance of a length of wire per change of temperature. Platinum (Pt) is by far the most common material used for RTDs. Pt RTDs are very stable, as they are not affected by corrosion or oxidation. Copper has high temperature limitations: it has a tendency to melt, and metals other than platinum are not as stable or as repeatable.

Pt RTDs are highly standardised and are available in a range of accuracies. The relevant standards are BS EN 60751 (British Standard) and the European DIN/IEC 751, which control tolerances and accuracy. There’s a nice table here.

There are RTDs available for almost any application requiring accurate and consistent temperature measurement.

I haven’t found much about thermal response times, the best I could discover being from 2 seconds to 65 seconds. That would depend on the probe encapsulation material and amount. Everything else waxes voluminously about stability and linearity. Calibration is easy: use a jar of slushy ice (not too much water, the ice shouldn’t be floating!) to set the 0° point. The probe’s resistance will be 100Ω at this point.
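For anyone wanting to turn a measured resistance into a temperature, here is a minimal Python sketch of the usual Pt100 relationship. It assumes a standard IEC 60751 Pt100 (100Ω at 0°C) and uses the standard coefficients for temperatures at or above 0°C only; below zero the standard adds a further term that this sketch leaves out. The function names are my own, and nothing here is taken from the BOM’s equipment.

```python
import math

R0 = 100.0      # ohms at 0 C, the ice-point value mentioned above
A = 3.9083e-3   # IEC 60751 coefficient, per C
B = -5.775e-7   # IEC 60751 coefficient, per C squared

def pt100_resistance(temp_c):
    """Resistance in ohms for temp_c >= 0 C: R = R0 * (1 + A*T + B*T^2)."""
    return R0 * (1.0 + A * temp_c + B * temp_c ** 2)

def pt100_temperature(resistance_ohm):
    """Invert the quadratic above; valid for resistance >= R0 (T >= 0 C)."""
    disc = A * A - 4.0 * B * (1.0 - resistance_ohm / R0)
    return (-A + math.sqrt(disc)) / (2.0 * B)

print(pt100_resistance(0.0))                      # ice point
print(round(pt100_resistance(100.0), 4))          # boiling point of water
print(round(pt100_temperature(109.73), 2))        # a warm room, roughly
```

The slope works out to a little under 0.4Ω per degree, so resolving 0.1°C means resolving about 0.04Ω, which is why lead resistance and self heating matter when wiring one of these up.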

Some temperature measurement guidelines for those who would like to make their own thermometers using an RTD. If the BOM’s readings are so poor, they must be applying these devices incorrectly or doing something badly wrong.

Hope this is informative for everyone.

20

Yeah, I know, USD 95.00 for a thermometer. Well, it’s not gold plated, it’s platinum :-).

The very best.

20

This is when the rot set in.

http://www.bom.gov.au/water/news/article.php?id=63

21

The BOM will not release its data or the algorithms that they process it with as they know that it would alert the Public and Sceptical Scientists to the magnitude of the [snip] that they are perpetrating in the name of CAGW theory and Global Warming Alarmism. The BOM knows that what they are doing is scientifically illegitimate and a misuse of taxpayers’ money.

42

I’ve just been monitoring the early morning temperatures online, in my home town. The BOM weather station is near a cluster of buildings, a no through road and a car park. Some vehicles start to arrive at the car park in the early am.

This morning I observed the following: 0550 hrs 2.9C, 0555 3.1C, 0600 2.6C, 0612 2.8C, 0630 2.7C. I wouldn’t guarantee that my clock is in sync with the BOM’s clock.

A 0.5C fall in approx 5 minutes, following a 0.2C rise in approx 5 minutes. I wish I had started monitoring a bit earlier. I wonder how much effect arriving vehicles had on the measurements, or temperature pulses from UHI and wind swirls.
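For what it’s worth, the step changes can be tallied with a few lines of Python. This is only arithmetic on the five readings quoted above, with the times taken at face value; the helper name is my own.

```python
# The five readings quoted above: (time as HHMM, temperature in C).
readings = [("0550", 2.9), ("0555", 3.1), ("0600", 2.6), ("0612", 2.8), ("0630", 2.7)]

def minutes_since_midnight(hhmm):
    """Convert an HHMM string such as '0550' to minutes since midnight."""
    return int(hhmm[:2]) * 60 + int(hhmm[2:])

# Change between each pair of consecutive readings.
changes = []
for (t0, c0), (t1, c1) in zip(readings, readings[1:]):
    elapsed = minutes_since_midnight(t1) - minutes_since_midnight(t0)
    delta = round(c1 - c0, 1)
    changes.append((t0, t1, elapsed, delta))

for t0, t1, elapsed, delta in changes:
    print(f"{t0} to {t1}: {delta:+.1f}C over {elapsed} min")
```

The largest step in the list is the 0.5C drop between 0555 and 0600.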

72

A bit of car exhaust passing near the BOM recording device could easily affect the daily minimum temperature which typically occurs about 5:30 – 6am.

Can you advise where your BOM weather station is?

21

We moved to town and ADSL in late 2009. I use eldersweather’s site, which I believe comes from the BOM. The local airport station was established about 1991. Now, from memory:

The 24 hr report developed from erratic half hour reporting plus “spikes”, (of temp, wind, rain) and now ten minute reporting. Along the way we saw Ken Stewart publish an analysis, a sound analysis, which brought BOM activities to notice.

I am pretty sure that it was after that that we started seeing strange reports, including multiple reports on the hour which were not always the same. The cynic in me wondered if this was to make it very difficult for freelancers like Ken to make an analysis of the figures. Perhaps a decoding algorithm was needed.

Lately we have a well organised report based on ten minute reporting, but it is noticeable that the reported maxima and minima often do not appear in the ten minute reports, falling outside them by a significant margin. It seems to me that the one second rule explains that.

102

Statistics are worse than meaningless unless they are in the hands of informed, conservative and skeptical humans endowed with common sense. Eg: A “record” hot day for August achieved on August 31 or a “record” cool day for August achieved on August 1. If you don’t immediately question the cause and hang-time of the high or low temp and if you don’t immediately notice the difference/distortion between a mid-August reading and an end of August reading and if you don’t wonder immediately about differences in measurement, UHI etc, then you may be highly qualified…but you are not educated.