
Wild Experiments? Alice Springs fossil fuel grid becomes too unstable with more than 13% solar power

Ponder how destructive solar power is: it only takes 13% solar to push a small grid to the edge

A little bit of solar power causes mayhem on a perfectly good grid.

NT Electricity Grid Map. Darwin is 1,300 km or 800 miles in a straight line from Alice Springs.

The Renewable Crash Test Dummy Country is aiming to be using 82% renewable electricity by 2030, but instead of making sure this works on a small scale at any one of our remote microgrid locations, where electricity is expensive to start with, we thought we’d do the experiment on the whole nation instead. So it is “sobering” to see how this fails at Alice Springs.

If there was a place on Earth that is well suited to wind and solar power, it surely is Alice Springs, which is 1,200 kilometers from the Northern Territory’s main electricity grid. Surrounded by a million square kilometers of largely uninhabited arid land, if we can’t plaster enough solar panels and windmills here to support a town of 25,000 people with no heavy industry to speak of, where can we?

Yet the bare truth is that solar energy provides just 13% of Alice Springs’ annual electricity, and fossil fuel based generation, they admit quietly near the bottom of an FAQ page, is, shhh, 87%. Only one in four houses has solar power, yet the grid is already overloaded when solar output peaks, and unstable when a cloud comes over and the whole town’s solar power goes down. (As it did in 2019, leaving many homes blacked out, as the engineers predicted would happen.)

So the “news” that the ABC reports is that someone has a plan to push Alice Springs from 13% to 50% renewable energy by 2030.
Despite this daunting task, ponder that the ABC is excited today that one small, extremely sunny isolated location might, in our wildest dreams, manage to achieve a bit more than half the target we set for the whole nation by 2030, but only if we spend $150 million (and over 20 years?). This is called “Leading the Country” — where they fail to meet the targets before everyone else does… When Victoria Ellis says “energy” below she means electricity.* Alice Springs can be powered by 50 per cent renewable energy in six years, report showsBy Victoria Ellis, ABC “News” According to the Alice Springs Future Grid website, about a quarter of Alice Springs homes have solar panels, and over a year about 13 per cent of the town’s energy comes from solar, but if more solar energy is added without further planning, the small electricity grid could become unstable. The Alice Springs Future Grid website says if solar panels across the town are generating a lot of power in the middle of the day and a cloud bank suddenly shadows them, their electricity production may drop more quickly than an alternative power source can be drawn upon, leading to a blackout. Alice Springs Future Grid director Lyndon Freason said in today’s system, there is sometimes lots of solar being produced that is not used by the grid. “It’s becoming increasingly difficult to efficiently absorb more renewables in the middle of the day when the sun is shining, without actually causing instability in the existing generation,” he said. So the only problem with solar power is that there is either too much electricity or not enough, or it disappears when the clouds come over, needs constant back up, and it can’t stabilize the frequency, right? And then there is the duck curve. Look at the ramping rate required from 4 to 6pm: The belly of the duck is the fall in the need for electricity at noon as solar peaks. 
The belly can’t be allowed to sink too far, because the gas/diesel power plant needs to keep running to keep the frequency stable. The “Roadmap” such as it is, is four scenarios with a bit more-or-less of this and that: Click to enlarge (From the PDF) The costs, the costs: I don’t know if anyone has mentioned that 2030 is not 20 years away: Mr Cocking [CEO of Desert Knowledge Australia] estimated implementing one of the scenarios would cost about $150 million over 20 years. “Ideally it would come from government, but most likely as well some private investment,” he said. More detailed estimates (page 63) suggest the costs could be as high as $216 million, but there will theoretically be about $50 million in fuel savings. It still works out as $6,000 per man, woman and child — which no one has to spend at all — because they just built the current diesel-gas power plant there in 2011 and upgraded it in 2018 at a cost of $75 million. Should we ask the people of Alice Springs if they’d rather have the money? For a family of four that’s $24,000. Might be nice? But as a microcosm of a national transition the Alice Springs mini grid speaks volumes about how absurd the whole crusade is. Currently when the wind and sun are asleep the town gets electricity from the Owen Springs Power Station which is a gas/diesel plant that can provide 80 megawatts whenever they need it. There is also an old power station built in 1973 that is still operational and a 5MW Battery Energy Storage System (BESS). Proportional to the size of the Alice Springs grid, it is (or was) effectively the “biggest battery” in Australia when it was installed in 2018. It is used mainly for stability and emergency power when the clouds roll over so the gas plant can ramp up. If it were to be used mostly for storage, the $8 million battery would only last about 20 minutes, or maybe 40, tops. This doesn’t scale well to a nation of 27 million people. 
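The per-person figures above are simple division, and a rough sketch makes them easy to check. This assumes the round numbers quoted in the post (a town of 25,000 people, the $150 million estimate, the $216 million upper estimate and roughly $50 million in fuel savings); the function and variable names are illustrative only and come from no report.

```python
# Back-of-envelope check of the per-person cost, using figures quoted in the post.
# All names here are illustrative; the inputs are the post's round numbers.

def cost_per_person(total_cost_dollars: int, population: int) -> float:
    """Spread a total cost evenly across every resident."""
    return total_cost_dollars / population

population = 25_000            # "a town of 25,000 people"
low_estimate = 150_000_000     # Mr Cocking's estimate
high_estimate = 216_000_000    # upper estimate cited from page 63
fuel_savings = 50_000_000      # theoretical fuel savings

per_person = cost_per_person(low_estimate, population)
per_family_of_four = 4 * per_person
# Upper estimate net of the theoretical fuel savings
per_person_high_net = cost_per_person(high_estimate - fuel_savings, population)

print(f"Per person (low estimate): ${per_person:,.0f}")
print(f"Per family of four:        ${per_family_of_four:,.0f}")
print(f"Per person (high, net):    ${per_person_high_net:,.0f}")
```

On these inputs the low estimate reproduces the $6,000 per person and $24,000 per family quoted above, and the higher estimate net of fuel savings comes out a little above that.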
For the record, a mini-Snowy 2.0 scheme is not possible in Alice Springs. There are some worthy hilly areas for sure, but annual total rainfall is barely 280mm or 11 inches, and quite random.

Don’t wash those solar panels?

Curiously, the tap water in Alice Springs is worse for the solar panels than the dust is:

Detailed studies have been conducted on this subject, concluding that dust does not have a significant impact on PV systems. This is perhaps surprising, but washing the panels with tap water in places where there is a high concentration of calcium (such as Alice Springs) can actually have a more negative effect than dust. The arrays at the DKA Solar Centre are washed once a year by a specialised company who use a reverse osmosis filtration system to treat their water before using it to wash the solar modules. (From the FAQ.)

The key statistics from the FAQ of the “Alice Springs Future Grid” (which describe the current grid):

In the 2021‑22 reporting period, total conventional generation capacity was 122.6 MW and operational maximum demand was 48.6 MW, not including requirements for system redundancy. It is noteworthy, however, that while the Ron Goodin power station is aged, it remains available for system redundancy. No definitive retirement date has been announced. Over recent years, more than 25% of the approximately 9,000 households in Alice Springs have installed DPV on their property rooftops. The maximum output capacity of all residential DPV systems in Alice Springs is estimated to be 23 MW, and historical generation data suggests in the order of a 9% contribution to overall consumption. Fossil fuel-based generation produced 87% of annual volume and centralised Renewable Generation produced 4%.

See the FAQ for more.

*Obviously solar power is not providing 13% of the town’s energy, which also includes petrol, oil and gas.

Chronicling the collapse of the Big-Government-made EV bubble

In today’s EV obituary column, Elon Musk has dropped a bombshell. Two months after Tesla chargers became the industry standard (which promised to save the other car makers) his profits fell, and he’s fired the entire EV charging team overnight. Hertz, meanwhile, has realized that dumping 20,000 electric cars in January was not enough, and it has to offload another 10,000 electric cars, which now amounts to half its EV fleet. And then comes the news that there might be a secondhand “timebomb” coming at the eight year mark when most EV battery warranties run out and cars will become “impossible to sell”.

As if that’s not enough, this week the fire and rescue experts in NSW are warning, in the politest possible way, that they might have to do a “tactical disengagement” from a car accident victim, which means leaving them to die in an EV fire if the battery looks likely to explode. They say that first responders need more training, as if this can be solved with a certificate, but the dark truth is that they’re talking about training the firemen and the truck drivers to recognize when they have to abandon the rescue.

EV crash victims could be left to die in battery fires without training for responders, inquiry told

Jennifer Dudley-Nicholson, The Driven

The NSW government’s Electric and Hybrid Vehicle Batteries Inquiry heard testimony from fire and rescue services, paramedics, the Motor Traders’ Association, and TAFE on Tuesday in its second public hearing. In the most serious incidents, firefighters said crews could be forced to abandon rescues or crudely rescue passengers from vehicles, and were being left “flying blind” at battery fires. VRA Rescue NSW Commissioner Brenton Charlton told the inquiry worse outcomes were also possible, including battery explosions in which a rescue operation would put emergency workers at risk.
“We need to prepare ourselves and our volunteers… for the point in time we have to do a tactical disengagement, meaning if someone’s trapped and it does high order (explode) you might not be able to do anything,” he said. “That will be a tragic, horrible thing to take part in.”

Just like that, Elon Musk fired the whole Tesla EV charging team, throwing the industry into chaos because Tesla’s network is the best by far and the bedrock of the so-called “transition”. Tesla had agreed to open its charging network to other EV makers only recently, and Joe Biden was delighted. Tesla is getting subsidies to expand its North American Charging Standard (NACS) system. But this move has shaken the whole industry.

Tesla layoffs shake confidence in the EV-charging future

By David Ferris, E&E News

In a single stroke, CEO Elon Musk called his company’s vaunted charging reliability into question when he laid off most or all of Tesla’s Supercharger team, the people who made Tesla the envy of the EV industry. The network they built is bigger, faster, smarter and more reliable than any other company’s, and has become the linchpin of the auto industry’s plan to persuade millions of Americans to buy EVs and turn the tide on climate change. “It feels like the rug just got pulled out from under a lot of the industry alignment that has been built in the last 12 months,” said Matt Teske, an industry veteran and CEO of Chargeway, an EV-charging software platform. “And leaves us on shaky ground.”

In 2022, as traditional automakers finally started delivering a substantial number of EVs to the roadways, they ran into a problem. Their drivers couldn’t use Tesla’s chargers, because they were meant only for Teslas. And the public networks had an array of reliability problems. Ford was the first automaker to hit on the solution. Last spring, it struck a deal with Tesla to use its 12,000 U.S. charging stations and committed to building Tesla’s charging technology, called the North American Charging Standard, or NACS, into its future vehicles. Other automakers followed suit in short order. By February, Tesla’s NACS had become the industry standard, with virtually every automaker planning to redesign their charging systems to meet Tesla’s specifications.

UPDATE: Elon explains it’s just a change of pace:

Tesla still plans to grow the Supercharger network, just at a slower pace for new locations and more focus on 100% uptime and expansion of existing locations

And the crowd is baffled. People are mostly aghast…

The Hertz debacle truly might become an obituary

Not surprisingly, it’s a financial ruin. Hertz shares have lost three quarters of their value since 2022. The company was an $11 billion company in 2021 when it announced the mass EV purchases; it is now a $1.4 billion company.

Hertz drops more EVs

Rental car giant Hertz has announced that it is selling off 10,000 more EVs than it planned in January when it set out to stem the tide of massive depreciation that hit its fleet of 60,000 EVs.

Rental car companies need to be able to sell off their ex-rentals for a reasonable sum, but just as Hertz realized it couldn’t afford to repair these cars, it also realized it couldn’t sell them either:

…the program came under stress when Tesla began discounting its cars last year. This set off a tsunami of depreciation for existing Tesla owners; including Hertz, which had the biggest exposure of anyone. In turn, other EV makers followed suit with discounts and retained values for all EVs became a race to the bottom.

And as word spreads, possibly no one else will be able to sell them either. What is an 8 year old EV with no battery warranty worth? It’s pot luck whether it will keep going or suddenly need a £15,000 repair…

The used electric car timebomb: EVs could become impossible to sell on because battery guarantees won’t last. Find out if you are affected

Money Mail can today reveal a timebomb looming in the second-hand market for electric vehicles (EVs). Our investigation found that many EVs could become almost impossible to resell because of their limited battery life. Experts said that the average EV battery guarantee lasts just eight years. After this time, the battery may lose power more quickly and so reduce mileage between charges. In some cases, the cost of a replacement battery is as much as £40,000. For certain EVs, the cost of replacing the battery could be ten times the value of the vehicle itself on the second-hand market.

Yet geniuses in government still want to push us all into EVs.
With uncanny timing the Australian Albanese government is about to launch emissions standards we don’t need to force people to buy a product they don’t want, in the hope, so they say, of stopping some storms. The insanity would be hard to fathom if EVs weren’t also the ideal tool for spying, data collection, law enforcement, and political control. Benefits that can launch a thousand political careers…

Thanks to Paul Homewood of Notalotofpeopleknowthat
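The Hertz numbers quoted in this post can be checked with a few lines of arithmetic. The sketch below assumes the post's round figures (20,000 EVs sold in January, another 10,000 now, a fleet of 60,000, and a company value falling from $11 billion to $1.4 billion); the variable names are illustrative.

```python
# Rough check of the Hertz figures quoted in the post (round numbers, illustrative names).

evs_sold_january = 20_000
evs_sold_now = 10_000
ev_fleet = 60_000

# Share of the EV fleet being sold off across both announcements
share_of_fleet_sold = (evs_sold_january + evs_sold_now) / ev_fleet

value_2021_billion = 11.0   # company value when the EV purchases were announced
value_now_billion = 1.4     # company value quoted in the post

# Fall in company value since 2021, as a percentage
value_drop_pct = 100 * (value_2021_billion - value_now_billion) / value_2021_billion

print(f"Share of EV fleet sold off: {share_of_fleet_sold:.0%}")
print(f"Fall in company value since 2021: {value_drop_pct:.0f}%")
```

On these inputs the two sales add up to half the EV fleet, as stated. The fall in company value since 2021 works out larger than the three-quarters share-price drop since 2022, which is measured over a different period.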

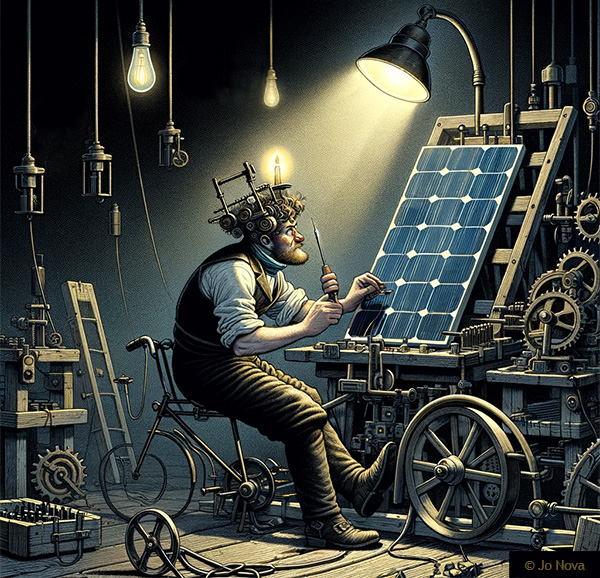

By Jo Nova

About 90% of solar panels installed in Germany come from China, and earlier this year one of the last solar panel manufacturers closed in Germany. Last week, what was left of the industry begged for mercy (and subsidies) which they didn’t get. Now another German solar panel manufacturer has closed down.

For some cruel reason German factories which are close to their customers can’t compete with distant foreign factories which have access to slave labor, fossil fueled shipping and cheap coal fired electricity?

The bigger question, seemingly, is how did the country that invented the printing press, diesel engines, and the theory-of-relativity get fooled by such a stupid ploy? Someone told them they could save the world with unreliable energy, so they converted their generators to unreliable ones, only to discover that they can’t afford to use unreliable generators to make the unreliable generators they need to keep saving the world?

The only government stupider than Germany is the one that has already seen how badly this worked out and announces they’re going to do the same thing. Australia is not only ten years too late, but China has flooded the market to the point where people are using solar panels as garden fences, and we have our own glut of solar power at midday.

The last hope of the German solar industry was a government mandated “bonus” for people who bought German solar panels.

April 23rd: German solar industry warns “last chance” for sector’s renaissance could be missed

Carsten Körnig, head of solar power lobby group BSW, …added that the solar industry was disappointed by the decision to leave out a “resilience bonus” for installations made in Europe. Given the stiff competition between producers in the U.S. and Asia for securing a share of the market in solar panel production, Körnig said including the bonus in the package would allow Germany to achieve greater supply security for the important future technology, adding that it is “perhaps the last chance for a renaissance of Germany’s solar industry”.

April 30: Solarwatt becomes second solar PV producer to halt production in Germany in 2024

Carolina Kyllmann, CleanEnergyWire

Solar panel manufacturer Solarwatt is set to halt production of solar photovoltaic (PV) modules in its factory in Dresden, business daily Handelsblatt reported. “Under the current circumstances, running a production facility here in Germany is extremely difficult economically, and we cannot justify this,” Solarwatt head Detlef Neuhaus told the newspaper. The plant with an annual production capacity of 300 megawatts (MW) will close “for the time being” at the end of August, with 190 jobs directly affected by the shutdown.

China generates 60% of its electricity with coal, while Germany uses 32% coal, and 30% solar-and-wind power. What should Germany do, bring back coal, or get some slaves?

Solar panels are now in the “top five” worst slave industries in the world, yet still barely any of the morality-police care. They’re apparently too busy atoning for slavery they didn’t cause that doesn’t exist anymore to worry about slaves that are alive today.

Image by Manuel Angel Egea

Only 18 months ago the Australian government gave $14 million to Andrew “Twiggy” Forrest to figure out if his team could build a 500MW electrolyser to make hydrogen gas on an island near Brisbane. It was going to be a glorious Australian green-techno future, the largest hydrogen plant in the world, but it’s missed three deadlines in the last three months to greenlight the project. Instead the Australian company is going overseas.

As Nick Cater points out, this part of the made-in-Australia renewable superpower is going to be made-in-Arizona because they still have cheap electricity, a miraculous 7.5c a kilowatt hour!

Australia’s manufacturing decline is a story of broken promises and failed industry welfare programs

Bowen described the project’s success as “critical” to Australia’s ambition to be a green energy superpower. It turns out abundant sun was not such a competitive advantage in the manufacture of green hydrogen. Low taxes, fiscally responsible government and cheap and reliable carbon-free energy are far more appealing drawcards for investors. The future is already being built in Buckeye, Arizona, where Fortescue is investing $US500m ($765m) in a green hydrogen plant it says will be up and running by 2026. In 2023, manufacturing in Arizona grew faster than in any other state. It includes energy and water-intensive industries such as silicon chip manufacturing, with Arizona coming from nowhere to fourth place among US states. ..It isn’t hard to work out why. Arizona’s top state income tax rate is 2.98 per cent. … For energy-hungry industries such as hydrogen and the IT sector, however, the biggest attraction is the industrial electricity price: 7.47 cents a kWh in Arizona compared to 18 cents in California.

Our electricity prices are twice as high as any hydrogen industry could bear

We are so far out of the running.
Last month the boss of Fortescue Energy said he was hoping our prices would fall (by half!), and cites Norway as an example of “cheap renewable energy” as if we could emulate that. It’s a bit rich given that the only renewable energy Norway uses is hydropower (96%). Norway has 31GW of hydropower while we have 4GW and can’t even tack on a 2GW pumped hydro dessert. To put it bluntly, Norway has a thousand fjords and a half million lakes and Australia has no fjords and about fifty salt lakes. Fortescue says hydrogen hopes rest on a halving of power pricesBy Peter Kerr, Australian Financial Review, March 11 2024 Mr Hutchinson [Fortescue Energy boss] told The Australian Financial Review Business Summit that high power prices were the main impediment at Gibson Island. “We’ve been working very, very hard on it,” he said. “But it’s tough based on the current power prices when we’re looking at competing globally. It’s a tough decision. The company expects to approve a green hydrogen project in Norway this year which would be powered by carbon-free hydroelectricity. “If you look around the world where you can get cheap renewable power, competitive renewable power is below $US30 a megawatt hour,” he said.

Once upon a time, Australia had electricity so cheap no one would have bought hydrogen. Now electricity is so expensive, hydrogen might be competitive, except no one can afford to make it. Like the Penrose impossible triangle, just keep going left and it never makes sense.

REFERENCE

AER quarterly wholesale electricity prices 1999-2023

By Jo Nova

Remember when Ford was just losing $38,000 on every EV? Those were the good days

The biggest star in the automotive world at the moment is a black hole, and it’s swallowing whole industrial giants. It’s hard to imagine a faster way to sabotage whole nations than to disguise your spies as academics and environmentalists. Then get them to convince the government to command a whole new market into existence in a highly technological field with the wave of a legislated wand.

These numbers are astronomical:

Ford just reported a massive loss on every electric vehicle it sold

By Chris Isidore, CNN

Ford’s electric vehicle unit reported that losses soared in the first quarter to $1.3 billion, or $132,000 for each of the 10,000 vehicles it sold in the first three months of the year, helping to drag down earnings for the company overall. Ford, like most automakers, has announced plans to shift from traditional gas-powered vehicles to EVs in coming years. But it is the only traditional automaker to break out results of its retail EV sales.
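The loss-per-vehicle figure is just the quarterly loss divided by the number of cars sold. A minimal sketch, assuming the rounded inputs quoted above ($1.3 billion and 10,000 vehicles); the names are illustrative.

```python
# Loss per EV from the rounded figures quoted in the CNN excerpt (illustrative names).

quarterly_loss_dollars = 1_300_000_000  # "$1.3 billion" (rounded in the report)
vehicles_sold = 10_000                  # "10,000 vehicles" (rounded in the report)

loss_per_vehicle = quarterly_loss_dollars / vehicles_sold
print(f"Loss per EV sold: ${loss_per_vehicle:,.0f}")
```

The rounded inputs give about $130,000 per car. The reported $132,000 presumably reflects unrounded loss and sales figures, which are not given in the excerpt.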

The EV unit at Ford sold 10,000 cars in the first quarter this year, which is 20% fewer than it sold a year ago. It’s that bad. In fact it’s worse. Those cars were also discounted. So the revenue mostly went beyond the event horizon, and fell an astonishing 84%.

Ford is expecting losses in the order of $5 billion for the full year. Their aims now are so low, they just hope one day to sell the cars for enough to cover the cost of making them, perhaps. They can’t hope to cover the millions spent on R&D in the foreseeable future. Indeed, even covering the costs in a market with a price war is said to be “very difficult”.

Meanwhile, two days earlier the IEA chief said the EV revolution was rolling on just fine

CNN readers must be confused.

The electric car revolution is on track, says IEA

[CNN] Global electric vehicle sales are set to rise by more than a fifth to reach 17 million this year, powered by drivers in China, according to the International Energy Agency. In a report Tuesday, the IEA projected that “surging demand” for EVs over the next decade was set “to remake the global auto industry and significantly reduce oil consumption for road transport.”

So the global EV market is being powered by “drivers in China”, like these drivers perhaps, who left their brand new EVs rotting in fields in China. That would be imaginary drivers? The propaganda never ends.

As Stephen Wilmot said in The Wall Street Journal last year, if Ford just canned the EV unit, its adjusted operating profit would be 50% higher. Surely that beckons…

Hat tip to Marc Morano @ClimateDepot

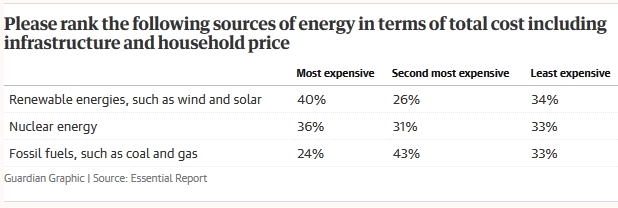

The sore losers of the renewable-fantasy hope you don’t expect them to apologize

We are at the beginning of the big-flip. The activist pundits are suddenly realizing that renewables aren’t cheap and worse, that the public know it. Without blinking, they’re switching from telling us how cheap renewables are to saying of course, it’s going to be difficult, like everyone knows this and they haven’t been completely wrong for twenty years and wasted trillions of dollars. They hope of course to erase the past, skip the apology, and slide the public straight into acceptance: that the transition will cost more, of course.

Take Peter Lewis, of Essential Polling. He writes snidely in The Guardian:

Here’s the truth: energy transition is hard. Not everyone gets a pony

The climate crisis has long been defined by its lies: From the original sin of science denial, to Tony Abbott’s confected carbon tax panic, to the latest yellowcake straw man. But the most damaging porky of all might be that the transition to renewable energy will be easy.

Did you see what he did there? He named and blamed conservatives and then pretended they were the ones selling the lie that the transition would be easy. It’s writing like this that makes The Guardian the tabloid trash can of history. The most damaging porky may well be that wind and solar would be cheap, but it was a progressive fantasy and Mr Lewis himself was practically on the sales team. Pity he doesn’t have the honesty to admit it.

Here’s the same Peter Lewis in 2017: smug, wrong, and condescending to the end

Clean energy is plummeting in cost, and the smart technology solutions that will make it work are proving themselves. The coal club can huff and puff but it’s too late to blow the renewable house down.
The new reformed Peter Lewis now says the transition is “hugely disruptive”: Both gloss over the hard truth that fundamentally changing the way Australia produces, shares and uses energy is hugely disruptive, particularly in the regions where new infrastructure is earmarked for land and sea. Given Peter Lewis’s childishly patronizing attitude, and dishonesty, we have to wonder how biased are those “Essential Polls”? The reason he’s flipped is that the latest polls show most people don’t believe renewables are cheap anymore: And, as this week’s Guardian Essential Report shows, one of the fundamental building blocks driving this narrative is unstable: people don’t believe renewables are cheaper. When asked to rank energy sources in order of cost, renewables are rated the most expensive. Fossil fuels are seen as a cheaper solution, while nuclear is preferred by those who don’t support the transition anyway. Back in 2015 fully 47% of the voters, or almost half, thought renewables were the cheapest source of electricity, but that’s fallen to 34%. In 2015 only 20% of people thought fossil fuels were cheaper. Now 33% do. And 40% say renewables are the most expensive of all. Things are shifting fast — last October 28% of Australians thought fossil fuels were the most expensive, but six months later, that has fallen to 24%. So get ready to hear them say “we always knew it would be expensive”. It’s coming. They’re going to want to stuff “renewables are cheap” down the memory hole. Never let them forget. We need those grovelling apologies, and with letters of resignation. h.t to Ben Beatty @EnergyWrapAU

The system is reaching a crisis point and April is turning out to be the month of confessions

His speech was the sound of an industry being tortured. The transition is going backwards. Big projects are stalled. Costs are rising and reliable old assets are being closed too quickly. It’s like we are disassembling the plane as we fly it…

A couple of weeks ago in Australia the chief of Alinta Energy admitted in a big speech that the industry needs to be honest with the public about the costs of the transition. This marks a big shift from the “cheaper and cleaner” misinformation which the renewables industry was practically built on.

Jeff Dimery had a stark warning: his company bought a large old coal plant in Victoria for a billion dollars in 2018, and it powers one fifth of Victoria. But to replace that today with renewables would cost $10 billion.

But he also laid bare the crushing effect subsidized rooftop solar PV panels are having on the transition. No news outlets seemed to appreciate the implications of this. Fully one in three Australian homes now has solar panels, but they are all dumping power on the grid at the same time, pushing wholesale prices into negative territory that burns the other generators. The midday solar glut, as he calls it, means no one wants to invest in large scale renewables. But as night follows day, surely that which ruins the market for large scale renewables would also ruin it for large scale fossil fuel plants too? The subsidized solar panels are vandalizing the whole market.

Skeptics who have been predicting this all along note that the same people who cheered every time a coal plant was struck down are now wading through an impenetrable swamp of their own creation. The same subsidies that hurt coal and gas power now wipe out the large (subsidized) wind and solar plants too. It takes some chutzpah to complain about solar subsidies ruining the market for other generators which are also subsidized.
Someone let a plague of solar-locusts on our grid. They eat the profits out of all the reliable providers, which close down. We are actively sabotaging the entire grid — killing off the parts that made it work. Australians to pay more for their energy as transition accelerates, Alinta Energy saysBy Colin Packham, The Australian Australians face higher bills as the country struggles to build adequate replacements for coal with soaring costs for new sources of green power and transmission, Alinta Energy chief executive Jeff Dimery has warned. The stark comments, described by the energy boss as “truths and straight talking” comes amid growing concern about the toll of Australia’s energy transition. Mr Dimery said Australian energy stakeholders must be honest with the public about the toll of the transition. Mr Dimery complains he can’t build anything profitable at $58 a megawatt hour. “When I sat down to write this speech, the future Victorian energy price for the 2026 calendar year was $58 a megawatt hour,” said Mr Dimery. “At $58, I can’t build anything to meaningfully prepare for coal to come out of the system. I can’t build more solar, because we already have a glut of solar in the middle of the day, which is sending spot prices deeply negative. If I was just looking at the forward price, I would also be very wary about building new wind, because the margins would be slim to non-existent, and any curtailment – which is a growing problem – could be disastrous.” If only the energy commentators of Australia could read the same AEMO reports that unpaid bloggers do? Then they’d know that this time last year old brown coal plants were still selling electricity for $15 a megawatt-hour. But Mr Dimery probably knows this, since he owns one of those coal plants, and he didn’t mention it either. (Perhaps he believes in the transition, or perhaps the Hong Kong owners of Alinta might not appreciate that?). 
He’s being more honest than the other energy chiefs, but Australians need the whole truth. Just what are we giving up by blowing up the old coal plants?

The renewables transition was like a gold rush, but quickly projects started to fail… as Big Government plans do:11 minutes: I also want to explain what’s caused the drop in investment for large scale renewables. … In the last 5 years the top three gentailers [combined generator-retailers] in this country have collectively written off in excess of $10 billion of shareholder funds. There’s a race to Net Zero but it’s supposed to be for emissions not for profit. In the early era of renewables Australia had the perfect investment climate for wind solar and pumped Hydro. It could have been seen as the gold rush for renewable generation and certainly we saw no shortage of companies trying to get a piece of the action, but very quickly projects started to fail, loss factors increased and investment cases started to crumble. With a lack of planning and proper infrastructure we quickly found the grid overwhelmed… Costs are rising rapidly: 15 minutes: Let me tell you why higher cost and uncertainty about recovering those costs that’s why in 2017 Alinta energy developed and built the first big battery in Australia for around $1.5 million per megawatt. Right now we’re building another one that will cost roughly $1.7 million per megawatt . In 2020 it cost around $850,000 to insure a gas fired power plant. Today it’s around $1.75 million — that’s up 40%. I shocked people when I spoke at a conference two years ago and said that it would cost $8 billion to hypothetically replace our Brown Coal fired power station which was acquired for $1.1 billion. Replacing it with pumped hydro and offshore wind today would now cost in excess of $10 billion. That’s up two billion in a mere two years. Developers rightly are afraid to lock in high costs in case they can’t be recovered. 
The Alinta chief admits his biggest problem is the glut of solar panels at midday

Everything he says about the problems with massive solar input at lunchtime is true for all the original baseload generators on the grid too. In full honesty he would admit coal plants were not retired because solar and wind were cheap, they were driven out of the market by the subsidy on an essentially irrelevant unreliable form of extra generation that always turned up when we didn’t need it:

18 mins: Without the deployment of new private capital, state and Commonwealth balance sheets simply cannot carry the financial burden. We have a glut of daytime rooftop solar energy at the same time that 95% of all large scale renewables are getting curtailed — basically switched off in some hours of high rooftop solar. The percentage of all energy produced by large scale renewables that was curtailed increased from 10% in the last quarter of 2022 to 13% in the last quarter of 2023. Now you might think 3%, who cares? Well boards care, investors care, and developers care. No one wants to lose 13% of their output and no one dares think just how much more could be lost that could be the difference between profitable and unprofitable. In short, ladies and gentlemen, continued subsidies at one end of the market are driving higher uptake into a glut and undermining the economics of new and existing large scale renewables.

But let’s be real eh? We were happy to destroy the profitability of the old coal plants and the gas plants that taxpayers built, which is what all the subsidized intermittent players did. We’re only complaining now, Mr Dimery, because we care about “renewable” profits.

The solution to the solar panel glut is battery storage (What did I say? One reason for the EV mandate is so they can make you buy the backup battery to store the useless intermittent watts in?) Dimery doesn’t want to offend 3 million solar panel owners, but he is quietly saying “they must pay”:

I know how much households love their solar and how important solar is to the transition, but as with any of the intermittent technologies on its own it has pluses and minuses. … the daytime production from rooftop solar needs to be stored and shifted to when it’s required. We’ll need household batteries but they’ll fill up quickly. We’ll need big batteries, and they’ll also fill up quickly too. EV’s will take time to build up to critical mass and for vehicle-to-home and vehicle-to-grid models to alleviate some of the imbalances of homeowners dominating solar and battery installations. We’re exploring other options too like inviting retail customers to be co-investors in wind farms and giving them a portion of the output offset against their bill as well as providing better insights about their appliances via itemized bills that show what’s being spent on heating and cooling and refrigeration.

It’s a solar death spiral

He points out that solar PV owners themselves don’t care about the negative wholesale prices at lunchtime (but in a real market they would).

Rooftop solar is contributing to low energy prices at various times in daylight hours but it isn’t affected by price signals in the same way large scale generators are. It’s a problem we need to solve.

So we subsidize lunchtime solar we don’t need, which makes electricity more expensive for those without solar. Eventually everyone feels they have to buy solar panels, but at the same time the solar glut is driving out the generators we need the other 75% of the day. This is a spiral that only goes down. The end is coming. The subsidy game can’t go on forever.
We need to put real prices on solar panels now, and if that stops all new solar being installed (except in remote locations) so be it. Then we need to rejig the billing system so those who installed the panels aren’t being subsidized by those who couldn’t afford them. It will take years to unravel this mess. The land of truth is arriving at the magic renewable tree.

By Jo Nova

The term “Net Zero” has become a dirty word

It’s a win. The climate wars will rage on, but the Net Zero spell isn’t working any more, so they will have to find a newer one without such a smell. The sacred propaganda term that was going to save all life on Earth until five minutes ago is more than just a worn out advertising slogan; the skeptics’ campaign against it has made it a toxic term. Like ESG, it’s become a liability. The people know “Net Zero” is not just a fluffy footprint on a forest, but an attack on their wallet and their lifestyle. It’s a great credit to GWPF and NetZeroWatch in the UK for turning this phrase back against the infinitely well funded financial-house-and-government-alliance.

Net zero has become unhelpful slogan, says outgoing head of UK climate watchdog

The Guardian (of the ruling class)

The concept of “net zero” has become a political slogan used to start a “dangerous” culture war over the climate, and may be better dropped, the outgoing head of the UK’s climate watchdog has warned. “Net zero has definitely become a slogan that I feel occasionally is now unhelpful, because it’s so associated with the campaigns against it,” he said. “That wasn’t something I expected.” Politicians on all sides are now wary of associating themselves with the term, he said, which was inhibiting progress.

The scare stories worked. Look at the backpedaling on meat and flying:

Tackling the climate crisis has been presented as a massive change, but Stark was at pains to point out that it would not be.
“The world that we’ll have in 2050 is extremely similar to the one we have now. We will still be flying, we’ll still be eating meat, we will still be warming our homes, just heating them differently,” he said. “The lifestyle change that goes with this is not enormous at all.”

Now they are trying to tell us it will be easy to change the weather? This is part of the big backdown we’ve seen in so many theatres. First ESG became a dirty word, now “Net Zero” is too. Money is fleeing from “sustainable investments”. EV’s are on the nose. It’s the end of an era that started (as best as I can tell) around 2015 and the Paris agreement and has been hammered for the last five years.

But make no mistake, the freeloading barnacles of bureaucracy and banking will transmogrify, and they will hide their intent a little more carefully. In Australia, after the Carbon Tax became a dirty term (when Tony Abbott won in a landslide) the tax returned under many guises, but it was never called a Carbon Tax again. Among many names, it became The SafeGuard Mechanism, where an emissions trading scheme was legislated unbeknownst to nearly everyone. Now they want to impose a fossil fuel tax. They are barely hiding it.

After years of failure at UN junkets to set up international emission trading schemes, which also got themselves a bad name, the EU has set up a Carbon Border Adjustment Mechanism. These carbon tariffs will take money from foreign countries to make their own emissions trading scheme workable. And just like all the other taxes, the media sycophants tell us we will “prosper” with an international carbon price. (Just as soon as we figure out how to smelt steel with solar panels.)


Copyright © 2024 JoNova - All Rights Reserved
